I have written about this before. Several times, actually, and I have done it with an obsessive frequency that suggests either genuine intellectual concern or a personality disorder that has yet to be formally diagnosed. I personally think it’s a combination of both.

I wrote about how AI is actually displacing jobs, I built a risk tool so you could look up your own occupation and feel appropriately anxious about it over your morning coffee, I wrote about the fear-mongering economics of the AI apocalypse narrative, and then I kept writing because apparently I have no other hobbies and because the data kept being more interesting than the headlines.

Here is what I found, after all that research and all those words. And I am going to put it in between quotes. Here it comes. . .

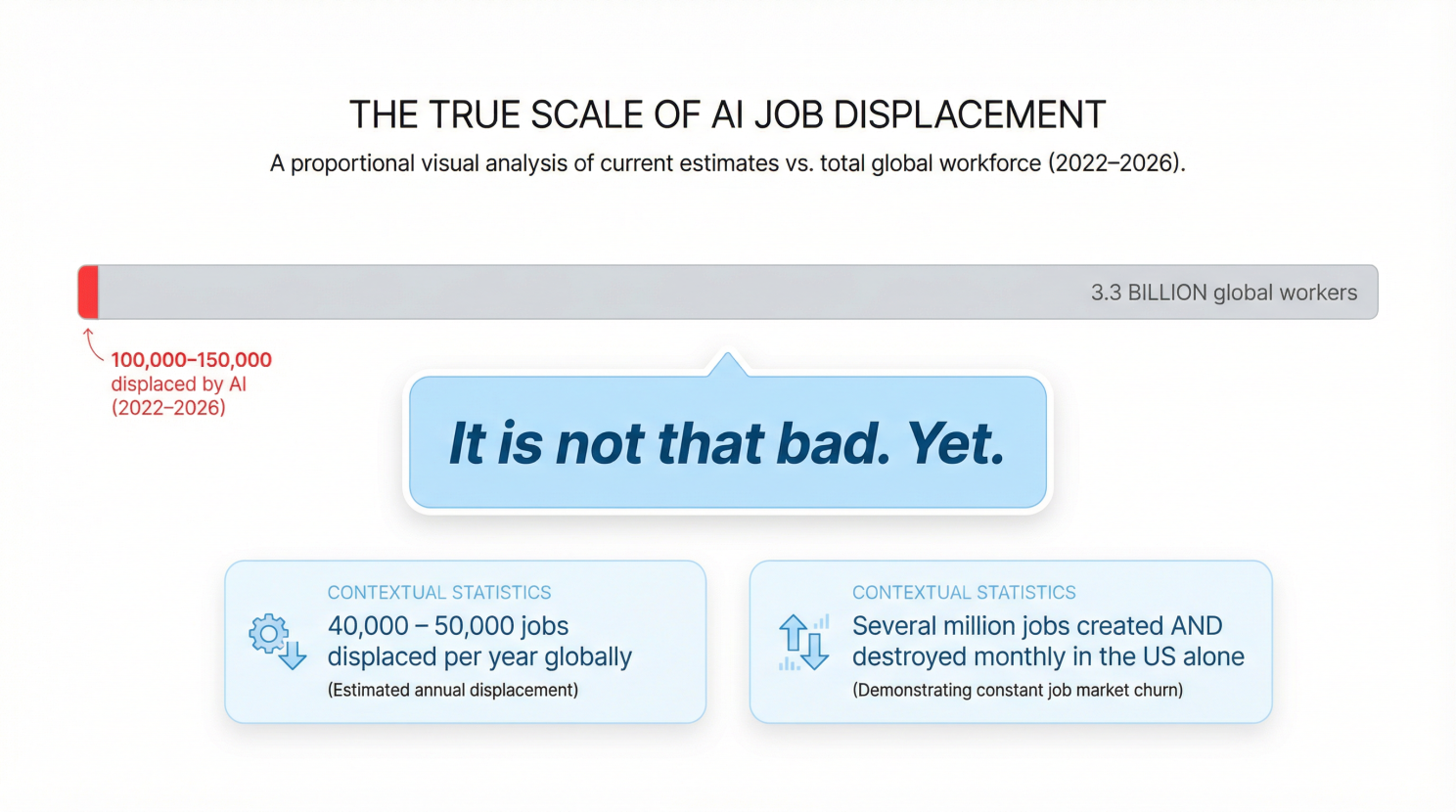

“It is not that bad. Yet.”

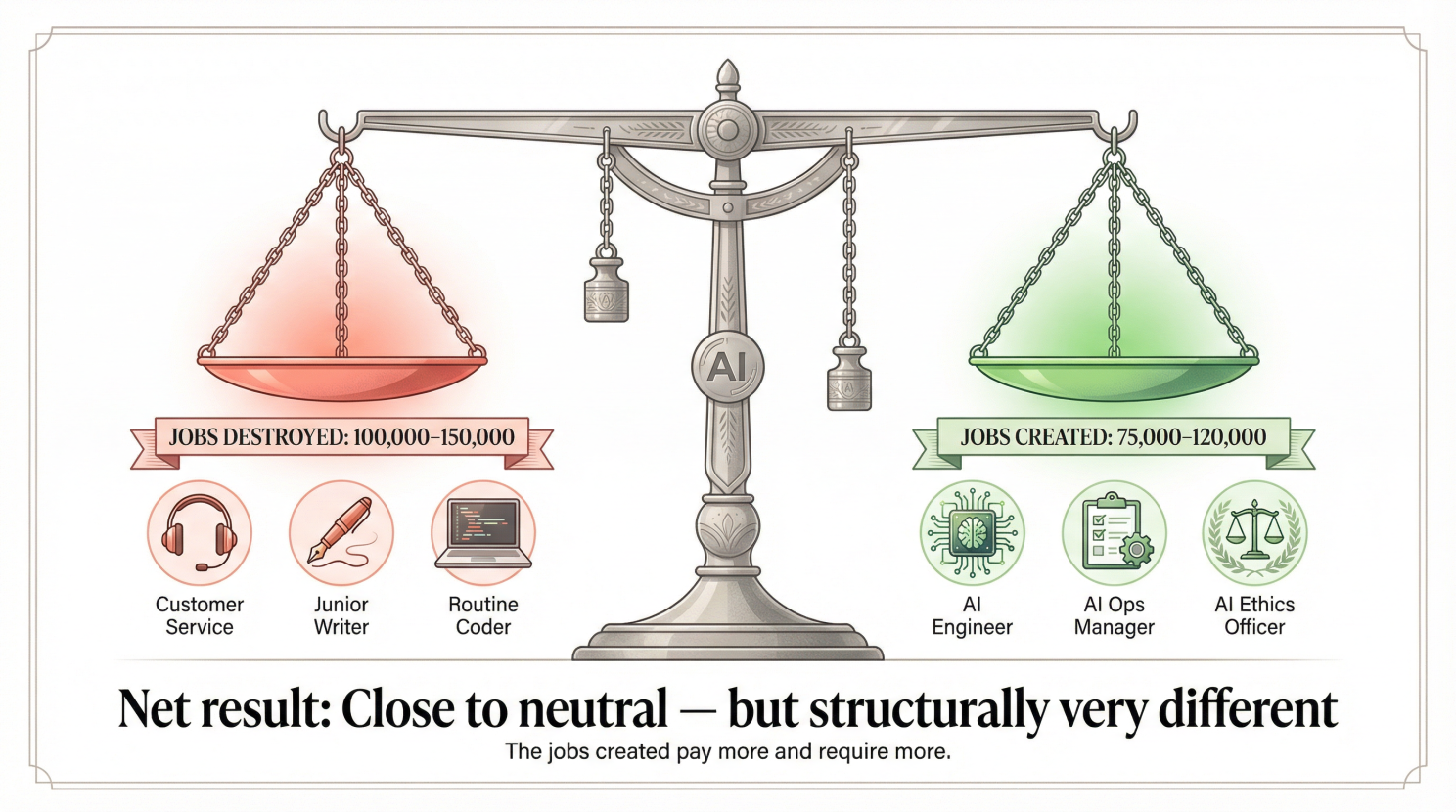

Let me be precise about what “not that bad” means, because I am a researcher and a teacher and I know that vague comfort is the enemy of useful understanding. Between November 2022 and April 2026, the cumulative number of jobs that can be attributed directly and with high confidence to AI displacement is somewhere between 100,000 and 150,000 globally, according to tracking data from Challenger, Gray & Christmas and a multi-source research study I commissioned myself (through my Oompa Loompas).

That number sounds enormous but you have to understand that, for instance, the United States economy alone creates and destroys several million jobs every single month through the perfectly normal process of businesses opening, closing, restructuring, and sometimes people making terrible decisions in leadership retreats in Scottsdale. One hundred and fifty thousand jobs over three and a half years, in a global economy with roughly 3.3 billion workers, is a rounding error. But unfortunately it has a very loud publicist.
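
To put that in proportion, here is a minimal Python sketch of the arithmetic, using only the figures quoted above. The variable and function names are illustrative, and the 3.3 billion workforce figure is the rough estimate from the text, not a precise count.

```python
# Figures quoted in the article; names are illustrative only.
DISPLACED_LOW = 100_000          # low end of AI-attributed displacements
DISPLACED_HIGH = 150_000         # high end of AI-attributed displacements
GLOBAL_WORKERS = 3_300_000_000   # rough size of the global workforce


def share_of_workforce(jobs: int, workforce: int = GLOBAL_WORKERS) -> float:
    """Return the displaced jobs as a percentage of the workforce."""
    return 100 * jobs / workforce


low_pct = share_of_workforce(DISPLACED_LOW)
high_pct = share_of_workforce(DISPLACED_HIGH)

# prints "0.0030% to 0.0045% of global workers"
print(f"{low_pct:.4f}% to {high_pct:.4f}% of global workers")
```

Even at the high end, that is fewer than five workers in every hundred thousand.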

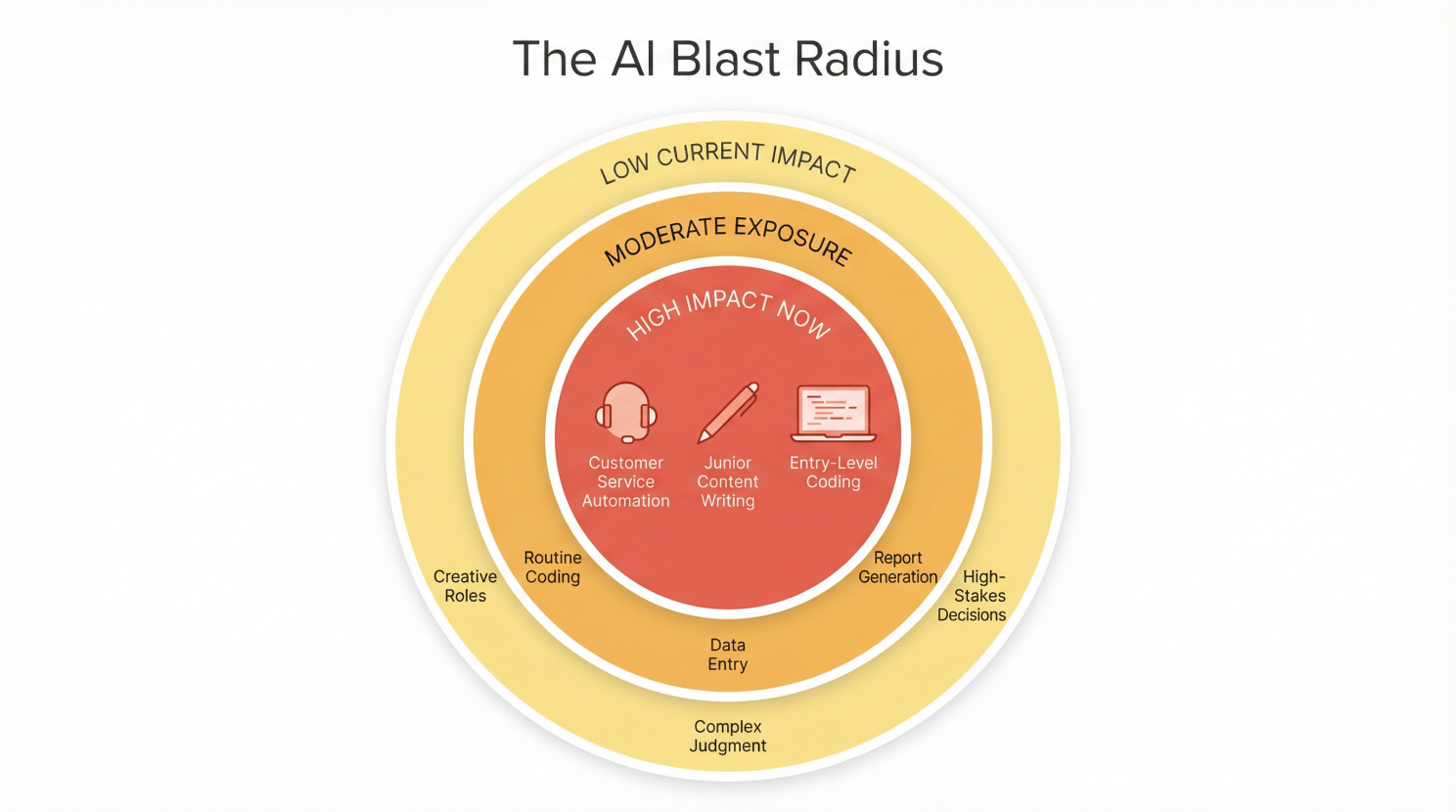

The actual sectors being hit are specific and concentrated, and we all know which sectors have been hit and why. I’m of course referring to customer service automation, junior content generation and consultancy, and entry-level software development.

That is it.

That, my friend, is the current blast radius. A customer service chatbot at Klarna replaces some agents and BuzzFeed deploys AI content tools and reduces editorial headcount.

A Harvard Business School working paper by Professor Suraj Srinivasan analyzed nearly all U.S. job postings from 2019 through March 2025 and found that postings for structured and repetitive roles fell 13% after ChatGPT’s launch, while demand for analytical, technical, and creative roles grew 20% in that same period. [URL in comments]

These are of course real effects, and it is truly meaningful for the people affected, but it is not the civilizational collapse that fills your LinkedIn feed with breathless commentary from people who have never run an AI system in a production environment and would not know a latency issue from a loss function.

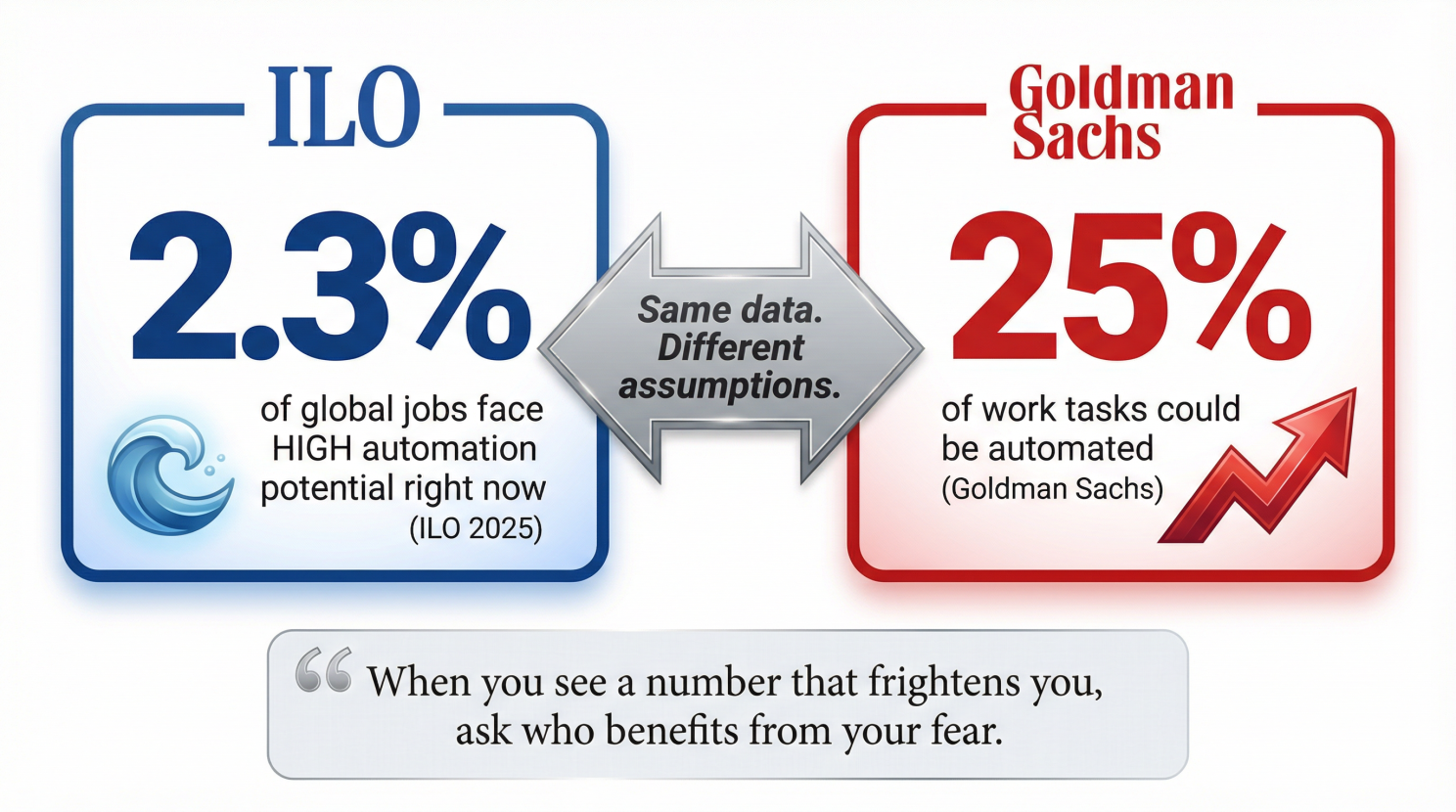

I want to be fair to myself here, which is difficult because I am also the person being mocked in this article, and the fair point is that the research is genuinely uncertain. The Peterson Institute for International Economics, not exactly a hysterical institution, noted in March 2026 that research on AI and the labor market is “still in its first inning”. The World Economic Forum in all its ‘wisdom’ projected 83 million jobs eliminated by 2025 alongside 69 million created, which is a net loss of 14 million, but that was under a five-year multi-technology scenario and this prediction has not materialized on schedule. Goldman Sachs said that 300 million FTE jobs are globally exposed, but that is an upper-bound exposure estimate, and certainly not a central prediction. Those two things are not the same, and the fact that every newsletter treated them as the same is a journalistic failure, yet it is so persistent it has become almost admirable in its naive consistency.

The honest summary is – and let me quote it again – that “AI is systematically attacking entry-level cognitive roles”.

And the thing is that this is corroborated by my work as an AI automation factory builder, where my primary role is to automate work through agentic process automation on a very, very large scale. My conclusion there is that AI in its current form is perfect for automating high-volume, low-risk processes, but when it’s a compliance-rich process, or a risky process, or one that has to be auditable, there’s a lower chance you’ll find AI displacing jobs.

So yeah, basically, if your job consists of collecting data from emails and putting it in an Excel sheet, running some macros and cut-and-pasting the results into a PowerPoint deck, well yeah, you have to fear for your job. And this is the current state of affairs, but don’t rest on your laurels yet, because this will change as well. Job displacement is real, but it is gradual, and the people screaming loudest about it are, almost without exception, people who have a financial interest in your fear.

Speaking of which…

Dario Amodei is not your friend (and neither is his valuation)

Dario Amodei is the CEO of Anthropic, the maker of Claude, and this man stood up in 2025 and told the world that AI could push unemployment to 10 to 20 percent within one to five years, and some versions of this statement got rendered as “50% of white collar jobs gone”, which is what happens when nuance meets a content machine that rewards alarm.

His statement was captured in these two videos:

The AI Citizen: “Anthropic CEO Warns: AI Could Eliminate 50% of White-Collar …”

60 Minutes: “AI could erase half of all entry-level white-collar jobs, CE…”

I want to explain why this is, to use a technical term, bullshit. Not the long-term concern part, the long-term concern is legitimate, but I’m referring to the one-to-five-year timeframe part.

Let us do a little math together, the way a teacher would when they want you to understand rather than simply memorize. The empirical evidence from three and a half years of actual, real-world AI deployment shows approximately 100,000 to 150,000 high-confidence AI-attributed job displacements globally (as of April ‘26). Amodei’s 10-20% unemployment scenario in the U.S. alone would require something in the range of 15 to 30 million jobs eliminated, but we are currently running at roughly 40,000 to 50,000 per year globally, with acceleration nowhere near the curve that gets you to tens of millions in the United States within five years.

The math just does not add up my friend, the trajectory does not support the timeline, and to add insult to injury, the empirical evidence from 2022 to 2026 is inconsistent with the pace of adoption needed to produce that outcome.
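
For anyone who wants to check the math, here is a back-of-the-envelope sketch in Python. It uses only the figures quoted above and makes two simplifying assumptions that are not in the original analysis: the observed displacements are spread evenly over the three and a half years, and the scenario gets the full five years to play out. All names are illustrative.

```python
# Figures quoted in the article; a flat yearly average is an assumption.
YEARS_OBSERVED = 3.5                      # November 2022 to April 2026
OBSERVED_GLOBAL = (100_000, 150_000)      # cumulative displacements, worldwide
SCENARIO_US = (15_000_000, 30_000_000)    # jobs the 10-20% scenario would need
SCENARIO_YEARS = 5                        # the long end of "one to five years"


def annual_rate(total_jobs: float, years: float) -> float:
    """Average number of jobs per year over the given period."""
    return total_jobs / years


observed_low, observed_high = (annual_rate(t, YEARS_OBSERVED) for t in OBSERVED_GLOBAL)
required_low, required_high = (annual_rate(t, SCENARIO_YEARS) for t in SCENARIO_US)

# Most generous comparison: slowest required pace vs. fastest observed pace.
smallest_gap = required_low / observed_high

print(f"Observed: {observed_low:,.0f} to {observed_high:,.0f} jobs per year, globally")
print(f"Required: {required_low:,.0f} to {required_high:,.0f} jobs per year, U.S. only")
print(f"Smallest gap: about {smallest_gap:.0f}x")
```

The flat average lands a little below the 40,000 to 50,000 per year quoted above, but the conclusion does not depend on it: even at 50,000 per year, the low end of the scenario needs a pace 60 times faster, in one country instead of the whole world.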

Now. Why would an intelligent, technically sophisticated person say something that the data does not support?

I am not going to say he is lying, because I am not a politician and I do not use that word lightly, but I will say this: “Anthropic’s valuation depends, in a very direct and measurable way, on the market believing that AI is about to transform everything at an extraordinary pace”. When Dario says the economy is about to be turned upside down by AI, he is simultaneously making a statement about the product he is selling.

Fear drives investment and urgency drives adoption. He is playing directly into the greed of the corporate world. A CEO who says “AI will have a moderate, gradual, sector-specific impact over the next decade” does not raise the money that a CEO who says “10-20% unemployment within five years” does. You get my drift?

I am automating jobs professionally, and even I am telling you to calm the heck down, which should tell you something about the gap between the narrative and the engineering reality.

In my own experience, this is explicitly done by Big Tech, and it is a misleading narrative. Amodei’s 2025 prediction “represents the extreme end of the displacement spectrum” and is “inconsistent with the pace of actual adoption and labor market adjustment observed to date”. I said it. . .

The International Labour Organization – a UN agency that sets international labor standards and researches work and employment globally – created this thing called the ILO Generative AI and Jobs Refined Global Index, which they published in 2025. It is their attempt to measure how exposed different jobs are to automation by generative AI specifically (not AI in general, and not robots), occupation by occupation and country by country. In their study, they found that only 2.3% of global jobs face high automation potential right now.

But on the opposite end, there’s Goldman Sachs saying that up to a quarter of work could be automated. Those two numbers cannot both be right at the same time, and the spread between them reflects not better or worse data but fundamentally different assumptions about what “automatable” means and how fast real adoption happens.

The thing I want you to remember as a takeaway is this, and let me put quotes around it again . . .

“When you see a number that frightens you, ask who benefits from your fear. It is not always sinister. Sometimes smart people genuinely believe their own projections, but it is almost always worth asking”.

AI cannot actually do your job yet – a hard ceiling at 35%

Here is where I get to use my own experience. And that is both the most useful and the most uncomfortable part of writing about this topic. I run an AI process-automation operation for a European Big Tech company (no that’s not an oxymoron), and this is not a hobby or a side project or a think piece. I really build agentic AI systems that automate real business processes for real companies, and I do it at scale, and I do it every day, and I have formed some very specific opinions about what AI can and cannot do in a production environment.

But when I started out running this process automation factory, I needed a benchmark. I needed to know what targets I could set for our ‘tour de force’. So, I set out to do some good old academic research.

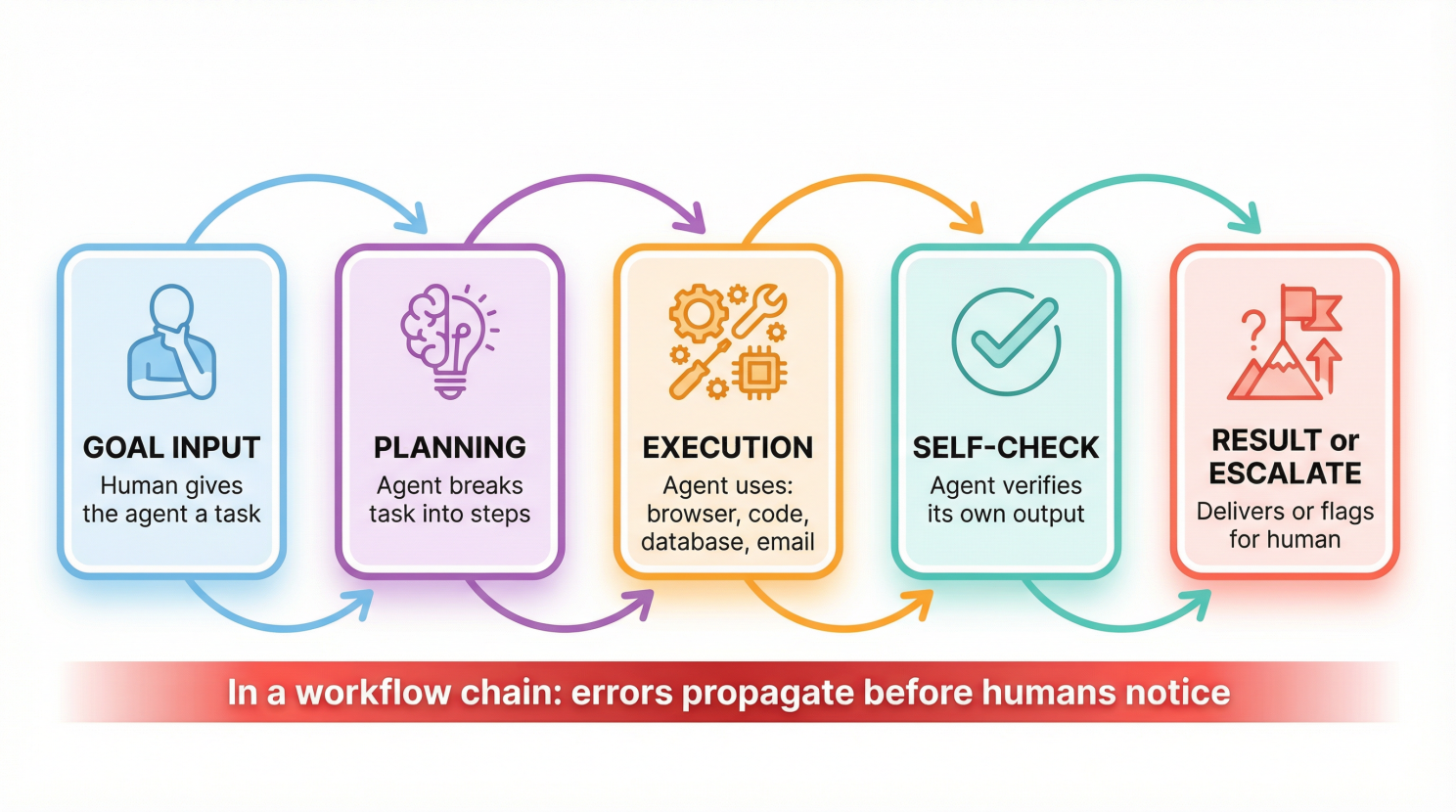

But before I get into that, let me first explain what “agentic AI” means, because if I do not, the rest of this section will be gibberish. When I’m talking about an AI agent, I’m not talking about just a chatbot that answers questions. You ask a bot something and then it responds and the interaction ends. But an agent is different. An agent takes a goal, thinks it through, breaks it into steps and then executes those steps using tools like web browsers, code interpreters, databases, email systems, and other software, checks its own work, corrects errors, and keeps going until the task is done or until it spectacularly hallucinates and does something that makes you question your life choices. Agents are why people are excited, and agents are also why I have grey hairs I did not have two years ago.

And then there are autonomous agents that run in workflows…

…and this is genuinely interesting, and it is also the technology I personally lose the most sleep over. A single agent that is performing a single task is manageable because you can watch it and you can catch it when it goes wrong. But a workflow is a chain of agents, each one handing its output to the next and making decisions that the next one treats as ground truth.

In an agentic workflow, you have an orchestrator, which is a master agent that assigns tasks to specialist sub-agents and decides what happens next. The orchestrator does not do the work itself. It simply manages. Then the sub-agents handle the actual execution: say, one searches the web, another writes the output, and yet another checks it against a set of rules, and so on. Each one is narrow and fast, and together they can complete in four minutes what used to take a human four hours.

When it works, it is genuinely astonishing, but when it doesn’t, the failure propagates through the entire chain before anyone notices, and by the time a human sees the output, the damage is already three steps downstream. I have seen it happen on multiple occasions, especially when upscaling the factory, and yes, the grey hairs are load-bearing. That is why we now have an agentic process-operator, one of the new types of roles coming to the marketplace, which I’ll be discussing down below.
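
To make the failure mode concrete, here is a toy Python sketch of an orchestrator running a chain of sub-agents. It is not my production setup: the `Step` class, the `orchestrate` function, and the three stand-in agents are all hypothetical, plain functions take the place of real models, and the writer agent is rigged to fail by returning an empty draft.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    """One sub-agent in the chain (hypothetical structure)."""
    name: str
    run: Callable[[str], str]                       # consumes upstream output
    check: Optional[Callable[[str], bool]] = None   # optional validation gate


def orchestrate(steps: List[Step], task: str) -> dict:
    """Run steps in order; stop and escalate at the first failed check."""
    payload = task
    completed = []
    for step in steps:
        payload = step.run(payload)   # each step trusts what it was handed
        completed.append(step.name)
        if step.check is not None and not step.check(payload):
            return {"status": "escalated", "failed_at": step.name,
                    "completed": completed, "output": payload}
    return {"status": "done", "failed_at": None,
            "completed": completed, "output": payload}


# Stand-in sub-agents. The writer simulates a silent failure.
def search(task: str) -> str:
    return f"facts for: {task}"


def write(facts: str) -> str:
    return ""  # simulated failure: an empty draft


def review(draft: str) -> str:
    return f"approved: {draft}"


# Without a gate, the empty draft sails through and gets "approved".
unchecked = orchestrate(
    [Step("search", search), Step("write", write), Step("review", review)],
    "Q3 report",
)

# With a gate after the writer, the chain halts before the reviewer runs.
checked = orchestrate(
    [Step("search", search),
     Step("write", write, check=lambda draft: len(draft) > 0),
     Step("review", review)],
    "Q3 report",
)

print(unchecked["status"], repr(unchecked["output"]))
print(checked["status"], checked["failed_at"])
```

In the unchecked run the reviewer happily approves nothing at all, which is the "three steps downstream" problem in miniature. The gate is a trivial length check here; in a real pipeline, deciding what to check and who gets the escalation is the hard part.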

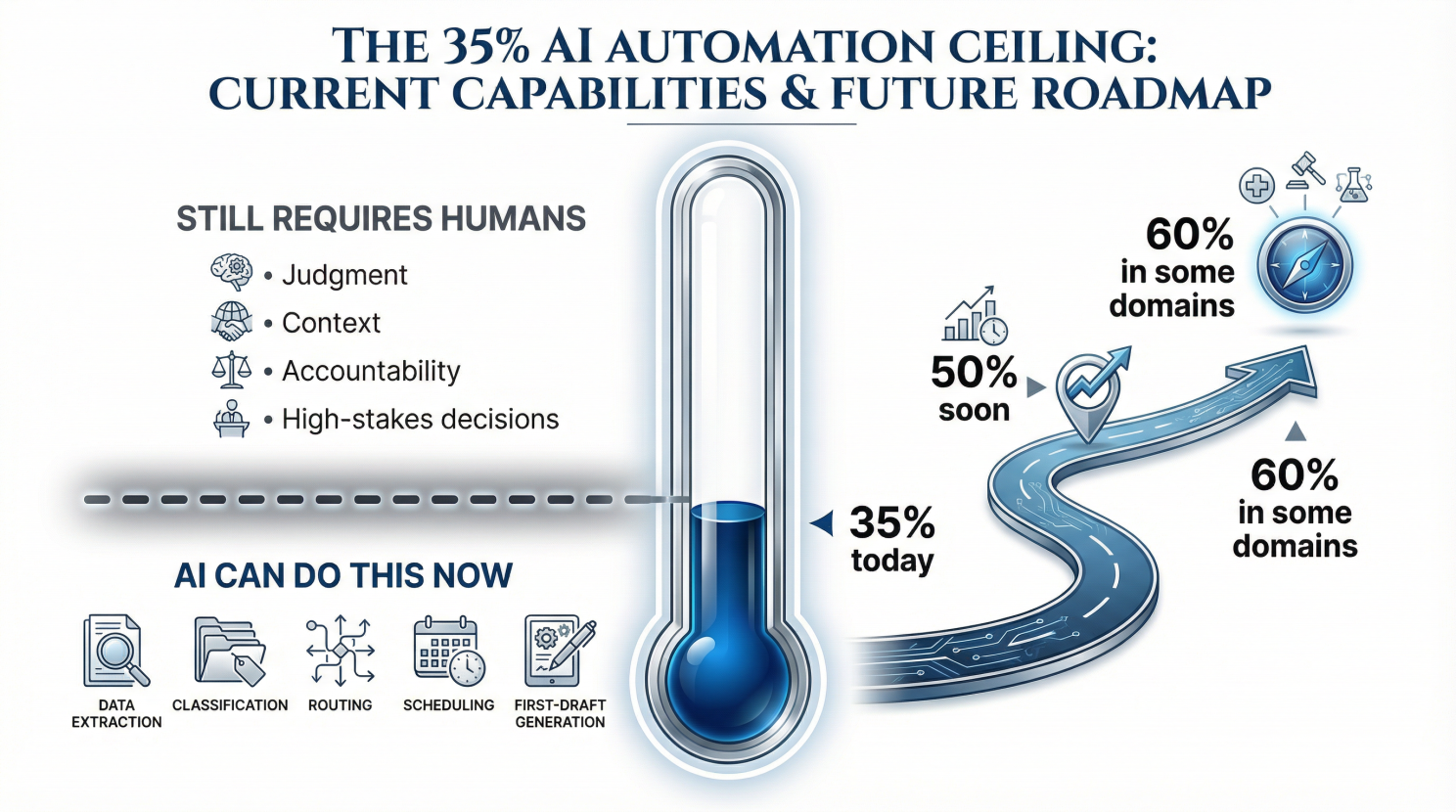

Anyway, based on my own operational experience and the research I have done, I believe that current AI systems – and yes, also the best agentic setups – face what I call a 35% agentification ceiling. What that means, in plain language, is that you can take any given business process, map all the tasks within it, and you will find that AI can reliably automate somewhere around a third of those tasks, sometimes a bit more in very structured environments. The rest either require judgment that models do not yet have, involve exceptions that are too unpredictable, or carry consequences too severe if the model gets it wrong.

AI is genuinely good at high-volume, low-complexity tasks where the input is clean and the output is supposed to be structured, and being wrong occasionally is acceptable.

I’m talking about tasks involving data extraction, classification, first-draft generation, scheduled reporting, routing, tagging. They can do this quite quickly and consistently, but when you introduce context that lives outside the document, judgment calls that require understanding of what a client relationship actually means or what a regulatory risk actually implies, or any situation where a mistake has legal or financial consequences that a company cannot absorb – well, then you hit that ceiling hard. The model keeps going with the same confidence regardless, and that is the problem. A human who does not know something usually signals uncertainty, but an AI that does not know something usually makes something up with exactly the same tone as when it does know.
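
The task-mapping exercise can be sketched in a few lines of Python. Everything below is illustrative: the `Task` fields simply mirror the three blockers named above (judgment, unpredictable exceptions, severe consequences), the invoice process is invented, and counting every task equally is a simplifying assumption. The example shows how the share is computed; it is not evidence for the 35% figure itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Task:
    """One task in a business process (hypothetical structure)."""
    name: str
    needs_judgment: bool = False
    unpredictable_exceptions: bool = False
    severe_if_wrong: bool = False


def is_automatable(task: Task) -> bool:
    """A task qualifies only if none of the three blockers applies."""
    return not (task.needs_judgment
                or task.unpredictable_exceptions
                or task.severe_if_wrong)


def agentification_share(tasks: List[Task]) -> float:
    """Fraction of tasks that pass all three filters (unweighted)."""
    if not tasks:
        raise ValueError("a process needs at least one task")
    return sum(is_automatable(t) for t in tasks) / len(tasks)


# An invented invoice-handling process, for illustration only.
invoice_process = [
    Task("extract fields from incoming email"),
    Task("classify invoice type"),
    Task("match invoice against contract terms", needs_judgment=True),
    Task("resolve disputed amounts", unpredictable_exceptions=True),
    Task("approve payment", severe_if_wrong=True),
    Task("explain a rejection to the client", needs_judgment=True),
]

share = agentification_share(invoice_process)
print(f"{share:.0%} of tasks pass all three filters")
```

In practice you would weight tasks by volume or hours instead of counting them equally, and the flags themselves are judgment calls made by someone who knows the process.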

But don’t get your hopes up too high, because the ceiling will rise.

I am personally building systems that target higher-complexity domains – I wrote about it in the blog post “the boring AI that keeps planes in the sky” – but this is a multi-year engineering challenge. The models are getting better, the tooling is maturing, and yes, the architectures for auditable AI decision-making are being developed right now, but “right now” in engineering time means a few years before those systems are in production at meaningful scale. And no, certainly not the breathless “within months” framing you get from product announcements written by marketing teams whose bonuses depend on the valuation of their company.

So yes, change is coming, my smart friend, but it will be more gradual than the fear merchants tell you. Use the time to get yourself a new pair of skills, I say.

The net positive that nobody talks about because it doesn’t sell newsletters

Let me start this part with an uncomfortable counterpoint to everything I just told you.

The fact that AI IS creating jobs.

And the funny thing is that it’s actually more jobs, in raw numbers, than it is currently destroying, at least in the specific window we can measure with any confidence. The central estimates from the research I did tell us that somewhere between 75,000 and 120,000 net new specialized AI roles were created globally between November 2022 and April 2026, against a central displacement estimate of 100,000 to 150,000. That is close to net neutral in aggregate. It’s trending marginally negative, but with enormous uncertainty on both sides of the ledger.
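
Here is that ledger as a small Python sketch, using only the two ranges quoted above. The names are illustrative, and treating the midpoint of each range as its central value is a simplifying assumption.

```python
# Ranges quoted in the article; names are illustrative only.
CREATED = (75_000, 120_000)      # new specialized AI roles, low to high
DISPLACED = (100_000, 150_000)   # AI-attributed displacements, low to high


def midpoint(bounds: tuple) -> float:
    """Middle of a (low, high) range."""
    return sum(bounds) / 2


# Worst case: fewest jobs created, most jobs displaced.
net_worst = CREATED[0] - DISPLACED[1]
# Best case: most jobs created, fewest jobs displaced.
net_best = CREATED[1] - DISPLACED[0]
# Midpoint against midpoint.
net_mid = midpoint(CREATED) - midpoint(DISPLACED)

print(f"Net range: {net_worst:+,} to {net_best:+,}")
print(f"Net at midpoints: {net_mid:+,.0f}")
```

The range straddles zero (from -75,000 to +20,000) with a midpoint of -27,500, which is what "marginally negative, with enormous uncertainty" looks like in numbers.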

The net positive I am referring to is not the aggregate number. The aggregate is uncertain and it’s close enough to zero that arguing about it feels academic. The net positive I mean is the type of job being created, and what it signals about where the economy is going. Because the new jobs that are being created by AI are not the same as the old jobs being destroyed. They are different in a way that matters enormously for how you should be thinking about your career right now.

Here is the teacher-explaining-it-to-a-smart-class version . . .

The jobs being killed are the jobs at the bottom of the white-collar ladder. Junior content writers, entry-level customer service agents, routine coders doing repetitive refactoring, you get the picture. These are the jobs that used to serve as the first rung on the career ladder. AI is eating those roles, and the National Bureau of Economic Research, in their “Working Paper No. 33690”, described what is happening as “still waters, rapid currents”. The surface statistics look calm, but the structural dynamics underneath are moving very fast. The ADP Research Institute reported that young workers aged 18 to 24 in highly AI-exposed occupations are experiencing concentrated employment declines while, by contrast, older workers in the same firms see gains.

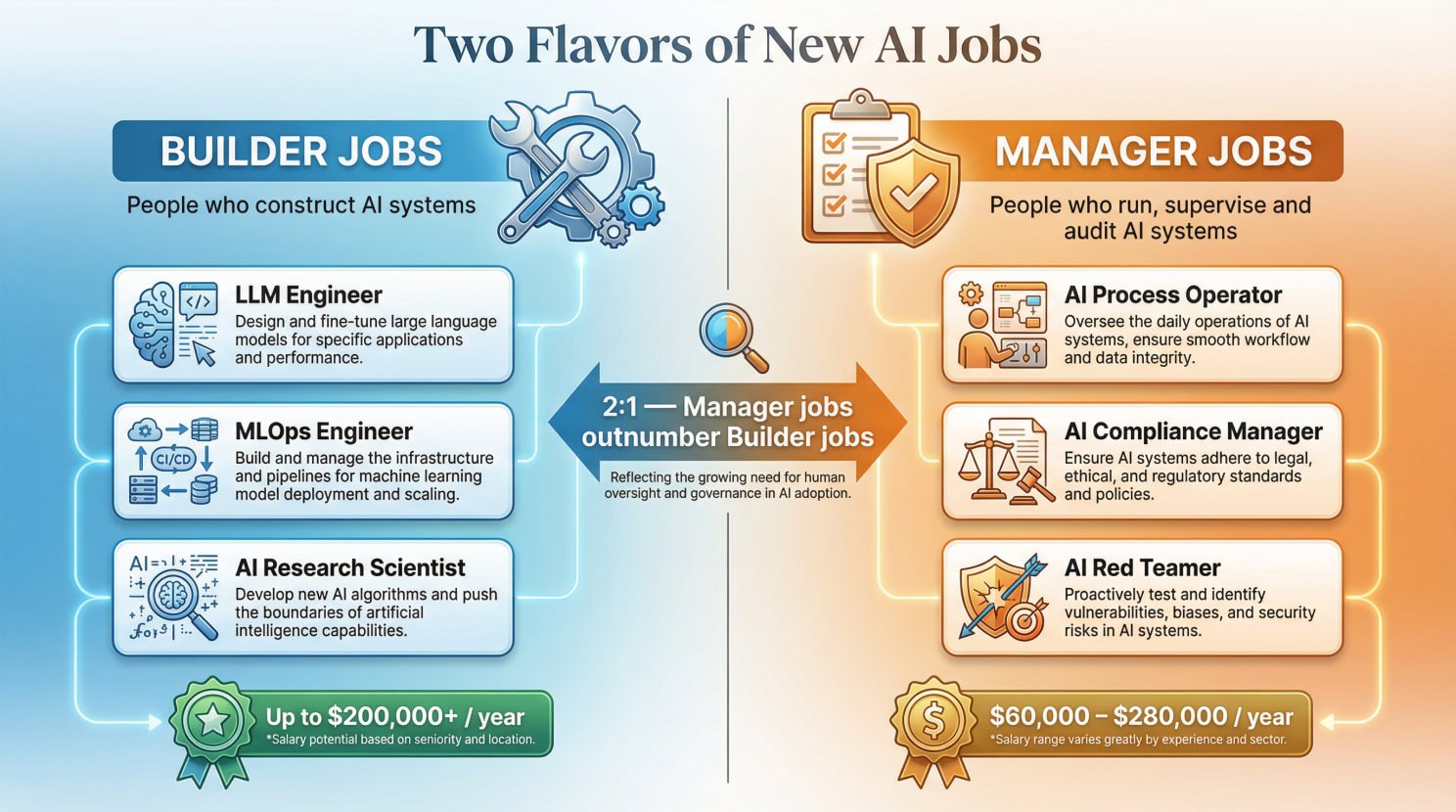

The jobs being created, on the other hand, pay more and come in two distinct flavors.

The first flavor is the Builder jobs – I’m talking about the people who construct the AI systems that are going to keep transforming the economy – and the second flavor is the Manager jobs – the people who run, supervise, audit, correct, and take responsibility for the AI systems that the Builders built. I am going to spend the rest of this article on both, with most of the attention on the second, because it is the second category that is genuinely new and genuinely strange and genuinely where the opportunity is for people who are not going to become machine learning engineers at age 40.

The people who build the machine that builds your replacement

I want to spend a bit of time on the Builder jobs because they are important and they are why my former students who went into machine learning are currently buying apartments while I am writing sarcastic articles on a Saturday afternoon. But I also want to be honest about who these jobs are actually for, because a lot of career advice in the AI space is essentially telling a person who wants to become a slightly better cook that they should get a PhD in food chemistry.

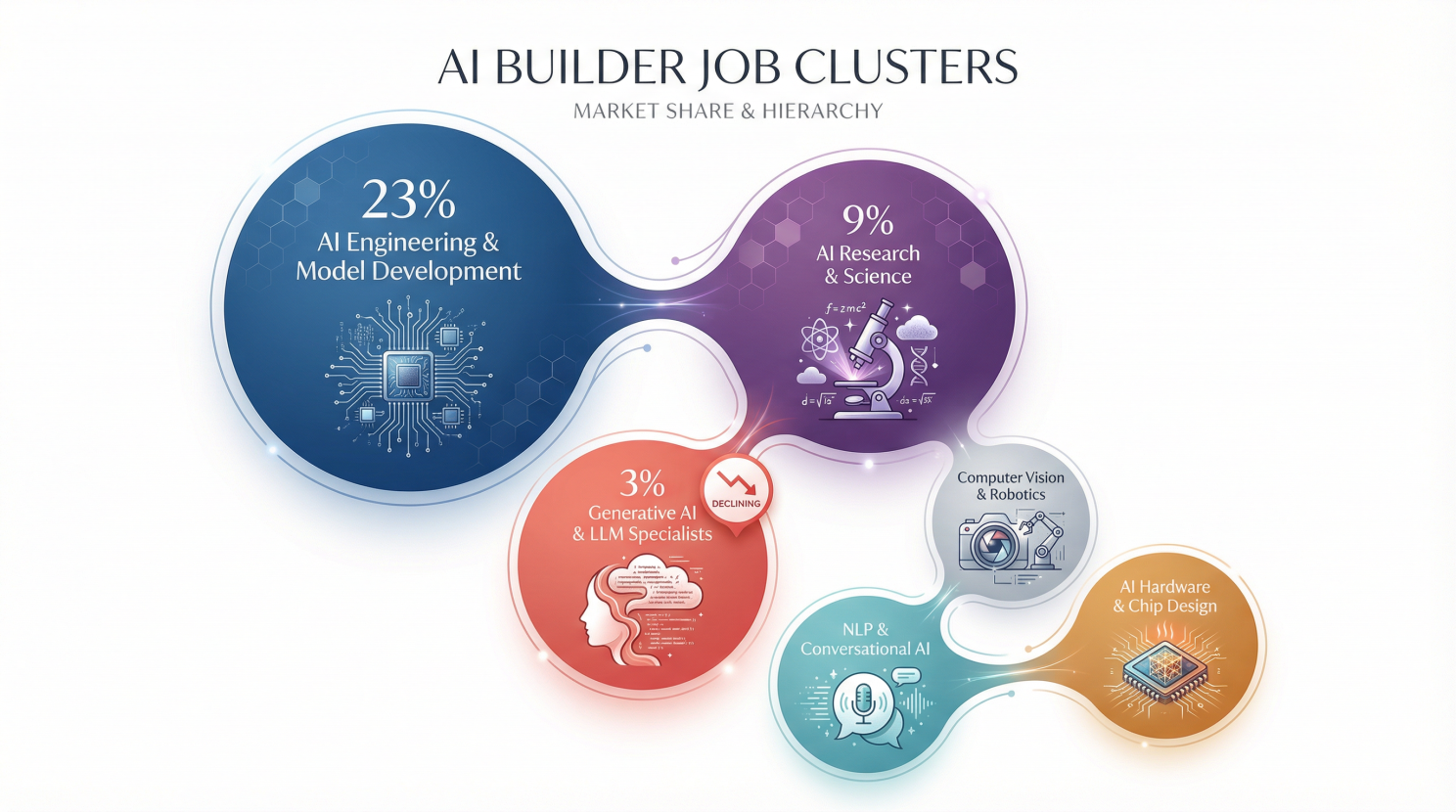

The Builder jobs fall into six clusters. Let me take you through them clearly, because most job-market writing about this uses terminology so casually that it ends up explaining nothing.

Let’s start with the AI Engineering and Model Development people. These are the MLOps Engineers, the LLMOps Engineers, AI Infrastructure Engineers, etc. They are the people who actually train models, yank them into production, and fix them when they break. A note on MLOps, because it deserves one: “MLOps” stands for Machine Learning Operations, and it means the same thing to AI systems that DevOps means to regular software. It is the discipline of taking a thing that works in a lab and making it work reliably in the real world at scale, which is always harder than the person who built it thinks it will be. This cluster accounts for 23% of all unique AI-specific roles in our sample, making it the second largest category after AI Interaction and UX Design, and the salaries range from reasonable to obscene, with LLM Engineers in the United States comfortably clearing $200,000 a year for someone who genuinely knows what they are doing.

The second cluster I could find in the data is the AI Research and Science people, which sits above Engineering on the intellectual prestige hierarchy and pays even more in exchange for requiring a certain level of mathematical fluency. Neuro-Symbolic AI Researcher, for instance, is a real title. It means someone who works at the intersection of neural networks (the pattern-recognition systems that power most modern AI) and symbolic reasoning (the rule-based, logic-driven approach to AI that was dominant before deep learning). Simply put, one approach learns patterns from data and is very good at determining whether something “looks like a cat”, and the other approach uses explicit rules and is very good at logic like “if X then Y, therefore Z.” Combining them is an active research frontier and a job that requires a PhD and the kind of patience with mathematics that I deeply respect and could never personally sustain. This cluster is just 9% of our sample, which tells you everything you need to know about how many people can actually do it.

The third cluster is Generative AI and LLM Specialists – with the Prompt Engineers, the Foundation Model Engineers, the people who specialize in the actual large language models that have been dominating news cycles since late 2022. It is already one of the smallest clusters at 3%, and it is shrinking. I need to be honest about Prompt Engineering in particular, because I have been honest about everything else: this job category is already showing signs of decline. The research I did identifies it explicitly as an “overstated creation claim”. It’s actually a job that was created by the limitations of early generative AI models, and it is being eroded as those same models get better at interpreting natural language. If you are currently studying to become a Prompt Engineer and nothing else, please stop what you’re doing and read the rest of this article.

The fourth cluster is Computer Vision and Robotics, and here we find the Perception Engineers, Autonomous Driving Engineers, 3D Vision Specialists – the people who teach machines to understand the physical world through cameras and sensors. It is important work and it is growing, but it’s largely irrelevant to the career conversation I want to have with the majority of the people reading this. It is less than 1% of the sample, and that tells you enough.

The fifth and sixth clusters are NLP and Conversational AI, and AI Hardware and Chip Design. This is where you find the Arabic NLP Engineers (a specific and genuinely fascinating growth area in the UAE, where the task of teaching AI to handle a language with multiple dialects, right-to-left script, and underrepresented internet training data is technically demanding) and the engineers who are designing the silicon that runs it all (the NPU Architects and Neuromorphic Engineers), who work on hardware that processes AI workloads more efficiently than the general-purpose chips everything was running on before. Together these two clusters are a small slice of the market.

If you have the technical background for these jobs, you should be pursuing them with focused urgency. If you do not, they are not for you, and pretending otherwise is a waste of your limited time on this planet. But the good news for you is that the second category of AI jobs is both larger in absolute numbers and considerably more accessible to people who did not spend their twenties doing linear algebra.

The people who babysit the machine that is replacing you

It doesn’t seem so, but this is where I spent most of my research time and, if I am being brutally honest, it is the real story of the AI labor market that is being written.

I want to tell you what I actually did to get to those percentages and jobs.

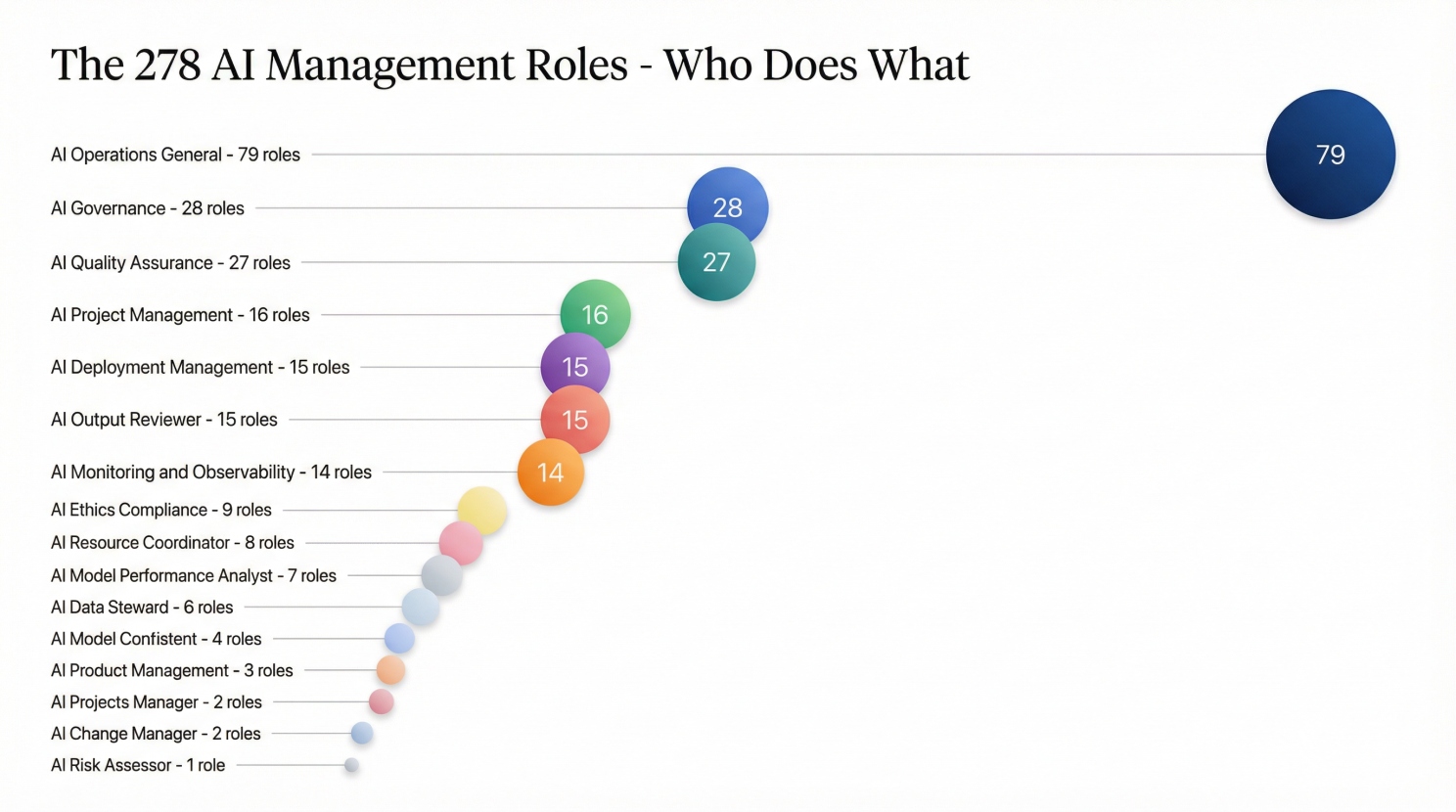

I got a bot swarm to scrape job listings from LinkedIn, Indeed, Glassdoor, Naukrigulf, Bayt, Zhaopin, Boss Zhipin, ZipRecruiter, and a bunch of other platforms, and I focused specifically on roles that exist not to build AI but to manage and supervise these agentic systems and, ultimately, to take organizational responsibility for AI systems that someone else built. What I found was that nearly twice as many unique job listings exist for managing AI as exist for building it!

Let me say that again with appropriate emphasis . . .

“The majority of new AI-specific roles in our research sample are not about creating the technology, they are about keeping it from destroying things once it has been created”.

Let that sit for a moment. I think it’s philosophically rich.

The technology that is supposedly going to render human labor obsolete has generated a sprawling new industry of human labor that is specifically dedicated to keeping it from going off the rails. Yes, the machine needs a keeper, or a poop scooper, whatever you call it, and a lot of them, apparently, and they pay quite well as I know for a fact.

These roles fall into subcategories that reveal the full anatomy of what it actually takes to run AI at enterprise scale.

There are AI Change Management roles, and yes, “AI Change Manager” is a real job title, because companies like Marsh McLennan have discovered that deploying AI without managing the human adaptation to it is how you get a system that technically works and organizationally fails. There are also AI Compliance roles, AI Audit roles (Audit – AI and Data Analytics Specialist at JCW Group in New York, substantial but undisclosed compensation), and AI Red Teamers whose entire job is to spend the working day trying to break AI systems by finding the inputs that make them behave badly.

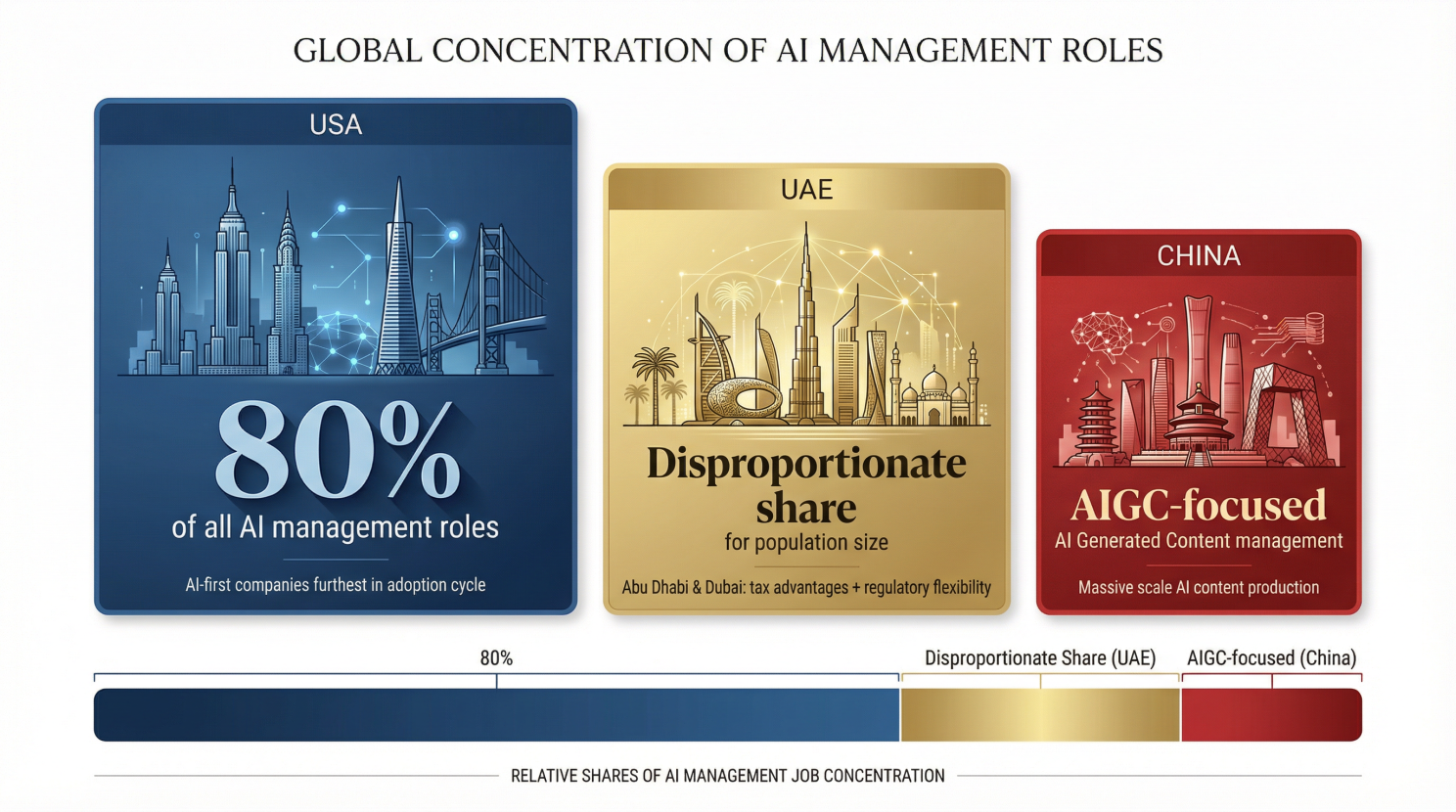

The United States dominates overwhelmingly. Roughly 80% of all AI management roles in the sample were of American origin, because the concentration of AI-first companies is highest in the US and because US companies are further along in the adoption cycle, having reached the stage where they need to govern what they have already deployed. Then, not surprisingly, the UAE accounts for a disproportionate share given its population and economic size, with Abu Dhabi and Dubai actively recruiting for AI Operations roles with a specific flavor that you can only find in oil-rich states that are using tax advantages and regulatory flexibility to attract the companies building AI and the professionals managing it. Then there’s China’s contribution, which is heavily weighted toward AIGC (AI Generated Content) management roles. And that kind of makes sense given the scale of AI content production in Chinese digital media and the very absent regulatory environment around what AI-generated content is and is not permitted to contain.

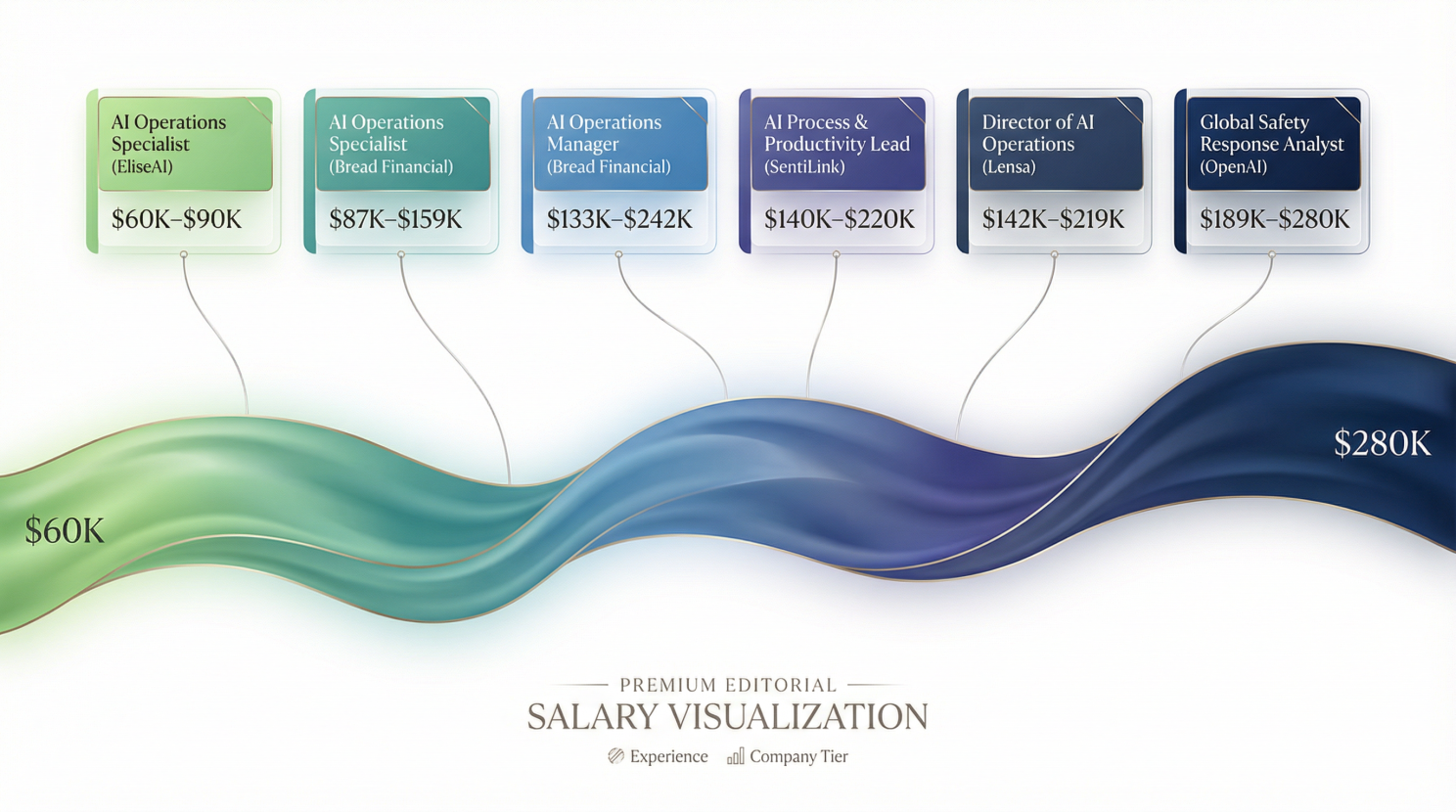

The salary range across these roles is genuinely broad. At the entry end you have AI Operations Specialists at companies like EliseAI who are targeting new graduates at $60,000 to $90,000 a year. At the senior end you have a Member of Partnerships Staff, Physical AI at Runway AI in New York that is paying $215,000 to $275,000, an AI Operations Manager at Bread Financial paying $133,500 to $241,900, and an AI Process and Productivity Lead at SentiLink sitting at $140,000 to $220,000. The Director of AI Operations at Lensa lands between $142,600 and $219,400 annually.

The taxonomy of jobs maps almost perfectly to the lifecycle of an AI deployment. . .

You need someone to design how AI will change your processes (AI Change Management), someone to deploy it (AI Implementation), someone to run it day-to-day (AI Operations), someone to make sure it is not breaking regulations (AI Compliance and Governance), someone to check that it is actually doing what it is supposed to do (AI Audit), someone to handle the edge cases and disasters that the model cannot handle alone (AI Incident Management and Safety Operations), and someone to make sure the whole system does not quietly develop the kind of biases that produce a discrimination lawsuit (AI Ethics).

Every single one of these roles exists because AI systems are not autonomous and they require a scaffold of human judgment and accountability that the industry is currently scrambling to staff.

The AI Process Operator

I am currently hiring an AI Process Operator. I want to tell you why I think it is one of the most important new job categories to emerge from this entire transition, because it is the role that I believe points most directly toward where a large portion of AI-adjacent employment is going.

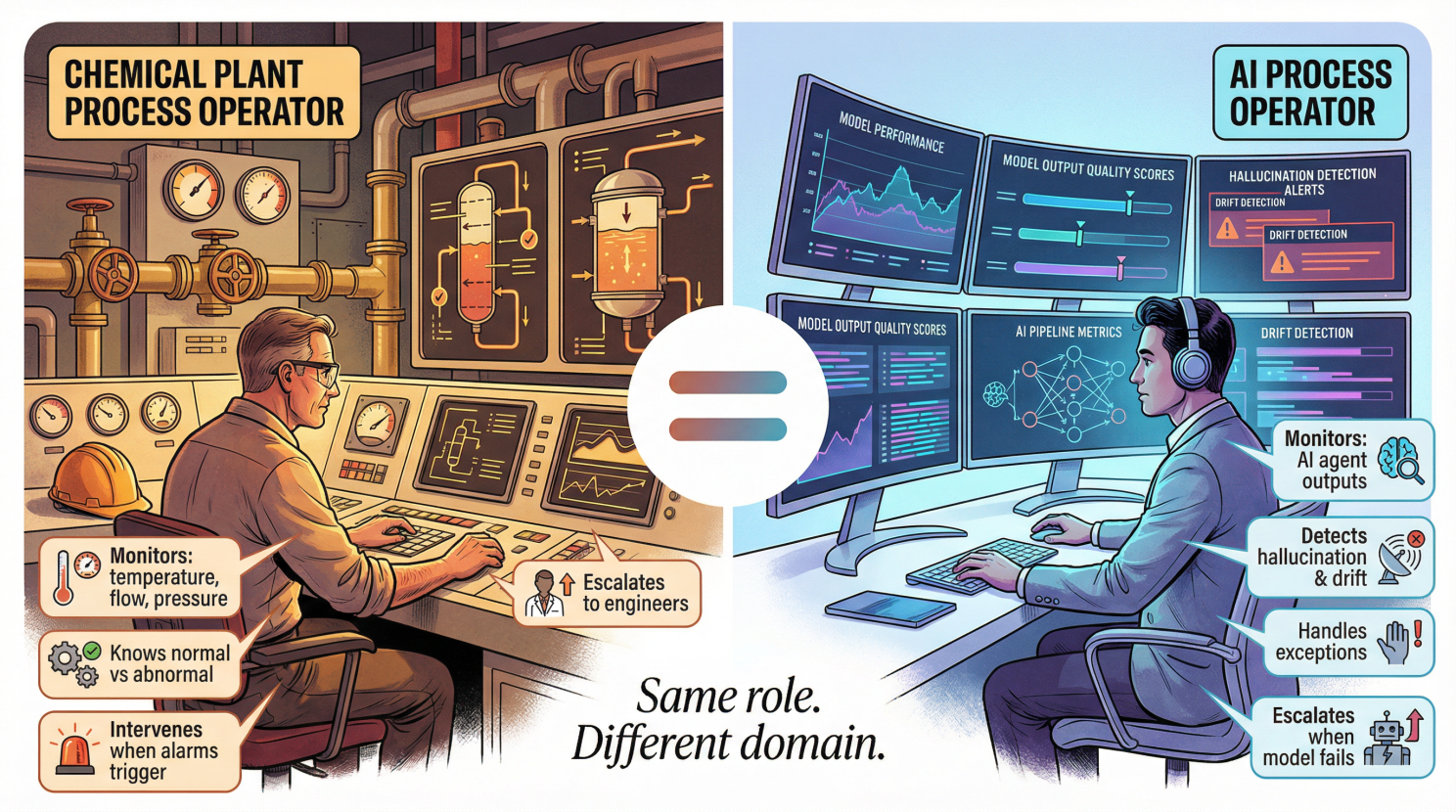

Let me start with an analogy, because it is a teacher’s best tool and I am not too proud to use one. I was trained to become a Chemical Engineer in the petrochemical industry – oil refineries, chemical plants, large-scale industrial facilities – and in that world there is a role called a Process Operator.

A Process Operator does not design the chemical plant. They did not engineer the reactor vessels, design the distillation columns, or calculate the heat exchanger specifications. What they do is run the plant: they sit in a control room surrounded by monitors displaying factory variables (temperature, flow, pressure, etc.) and alarm states across hundreds of interdependent systems. They know what normal looks like, what abnormal looks like, and what the difference is between an alarm that is informational and an alarm that means stop everything right now. And when something goes wrong they intervene: they adjust some parameters or start flaring gas, or they escalate to engineers when the problem exceeds their authority to fix.

An AI Process Operator is kinda like that role, but applied to an AI automation pipeline. I run AI agents that execute real business processes, and those agents handle tasks that used to require humans, who were making judgment calls with downstream consequences. When those agents work correctly, they are faster and more consistent than us humans, especially at 3am, and they do it without a staffing crisis. But when they do not work correctly, the consequences range from a minor bug to something that requires a very uncomfortable phone call with a client.

The AI Process Operator watches the pipeline of agents doing their thing.

They monitor outputs for signs that the model has started to hallucinate, drift, or produce outputs that are actually wrong in a way that a non-expert eye might miss. They handle exceptions, they maintain the documentation that tells the AI what to do, and they update it when the AI’s behavior reveals that the documentation was imprecise. Ultimately, they take responsibility for the outcomes of the process they are running.

This role requires domain knowledge (what does good output look like in this specific process?), analytical judgment (is this output wrong or just unexpected?), process discipline (am I following the protocol or improvising in ways that will be invisible until they create a problem?), and a level of attention that does not erode over a six-hour shift because the job is boring enough that attention is the most demanding thing it asks of you. And I’m telling you that last one is underrated.

The fact that Bread Financial is paying $87,900 to $159,200 for an AI Operations Specialist, that OpenAI is paying $189,000 to $280,000 for a Global Safety Response Operations Analyst, and that Rain is paying $147,700 to $200,000 for an AI Operations Lead reflects the market’s discovery that AI systems without human operators are not industrial-strength.

Winter is coming, but you have some time so use it.

I actually want to close with some of the conclusions for a change

- The data says that the net impact of AI on jobs right now is close to zero in aggregate and deeply uneven in distribution.

- The jobs being destroyed are concentrated at the entry level of cognitive work.

- The jobs being created require more, pay more, and are further up the skill curve than the jobs they are offsetting.

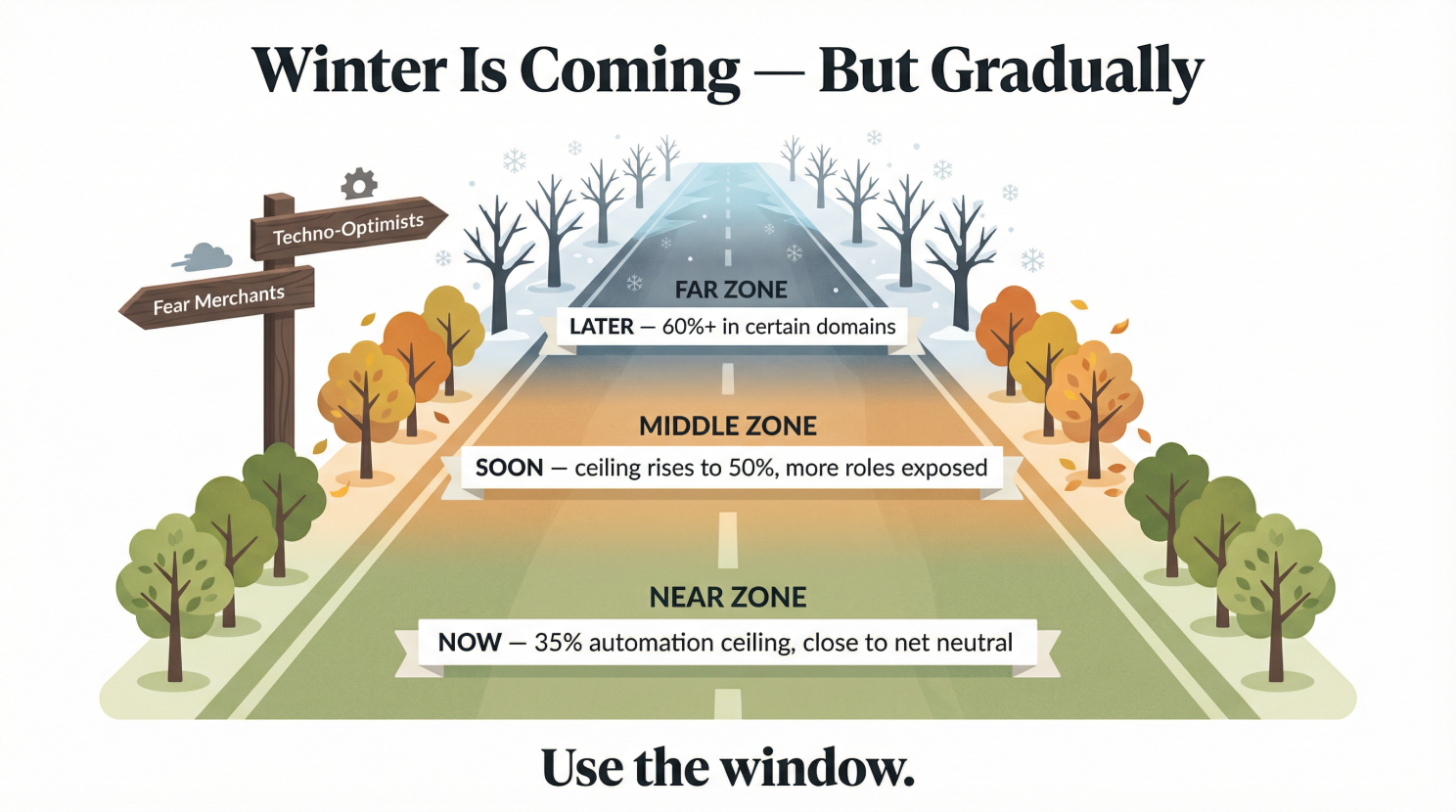

- The 35% ceiling on current AI automation is real, and it is based on engineering reality, but it is not permanent.

- The models will get better because the tooling for reliable AI in high-consequence environments is being built right now and I am one of the people building it, which gives me both confidence in the timeline and zero illusions about how hard it is.

- When that ceiling rises, and it will rise, probably to 50%, probably to 60% in certain domains, over the next few years, the categories of work that are currently safe will become less safe, and the categories of work that are still hard for AI will become more valuable.

- The fearmongers want you to remain nervous because their valuation depends on it and the techno-optimists want you naively enthusiastic, but both serve an agenda that is not yours.

- And yes, winter is coming, but it will arrive gradually, and the sectors and jobs that are exposed first are identifiable, but also the jobs that are being created by this transition are real and accessible and pay well.

It is like every automation wave we’ve seen before.

This research on job categories is based on 432+ AI job listings on LinkedIn, Indeed, Glassdoor, Naukrigulf, Bayt.com, Zhaopin, Boss Zhipin, and ZipRecruiter across the USA, UAE, and China (2025-2026). Net employment data from a multi-source research study triangulated across twelve independent evidence domains. The AI taxonomy covers 22 categories and 278 specialized AI management and operations roles.

Signing off,

Marco

I build AI by day and warn about it by night. I call it job security. Big Tech keeps inflating its promises, and I just bring the pins and clean up the mess.
