We have been working in artificial intelligence for many years, long before every software pitch deck acquired an AI slide. Our experience was built when artificial intelligence was an engineering discipline, and success was measured by whether systems could function reliably inside real organizations under real operational pressure.

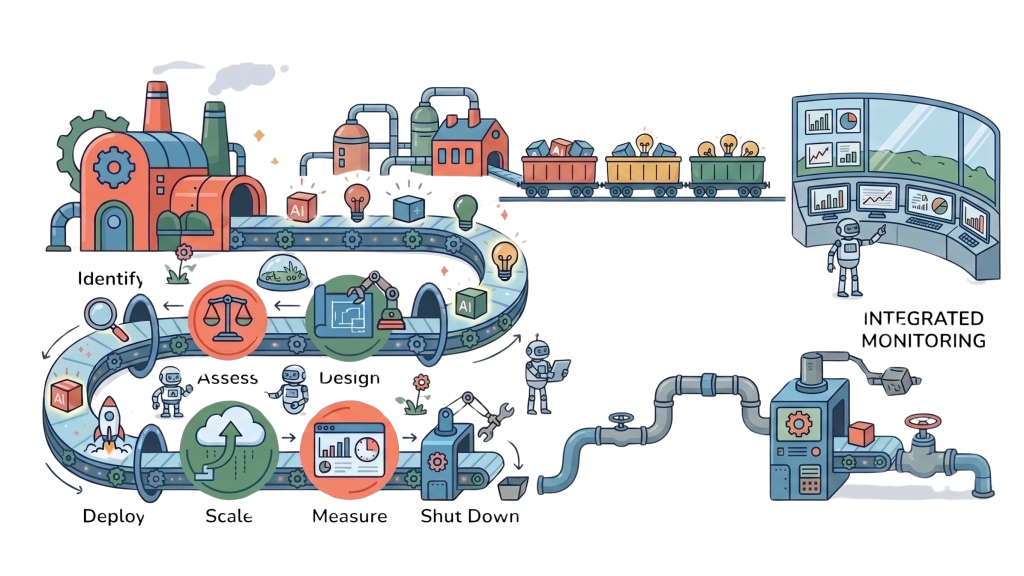

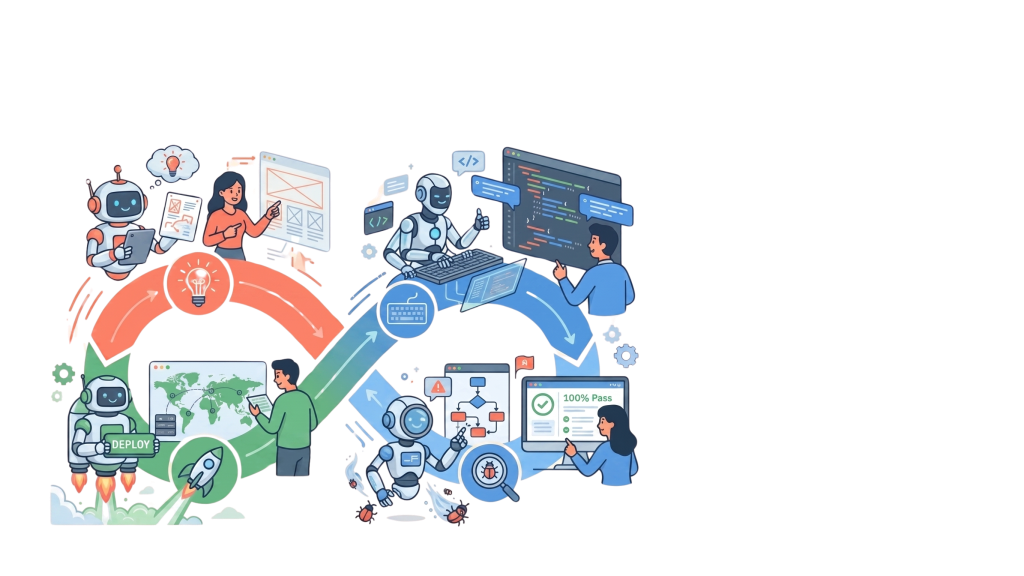

That history shaped how we think. Ever since, we have treated AI as an enterprise capability that must be designed, governed, integrated, and scaled with discipline. The people within Eigenvector have spent decades working on large-scale transformation programs involving operations, software delivery, governance, process optimization, and organizational redesign. We understand that AI adoption rarely fails because of model quality. It fails because of fragmented processes, weak ownership, unclear accountability, poor measurement, and the inability to turn experiments into repeatable operating capability.

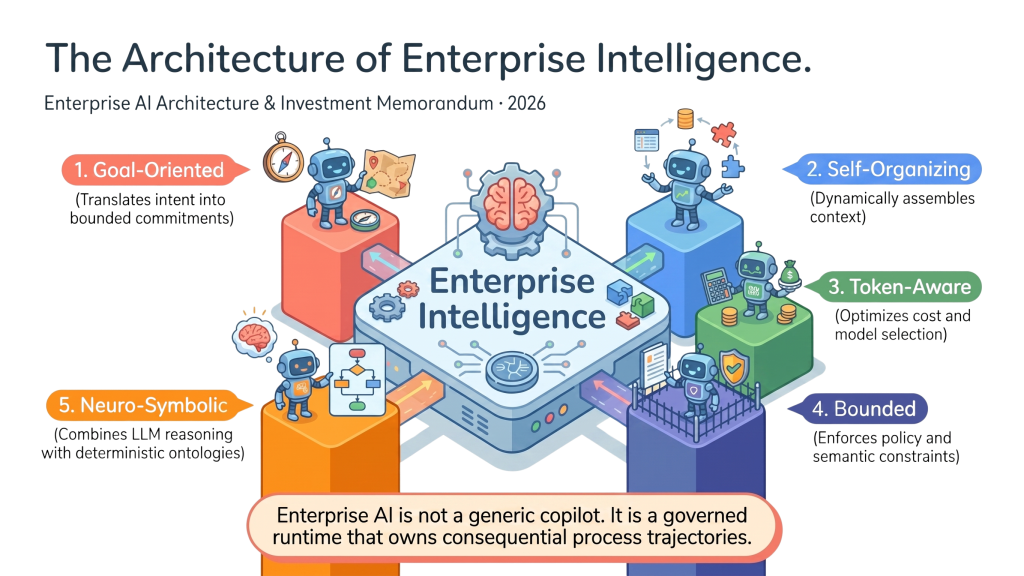

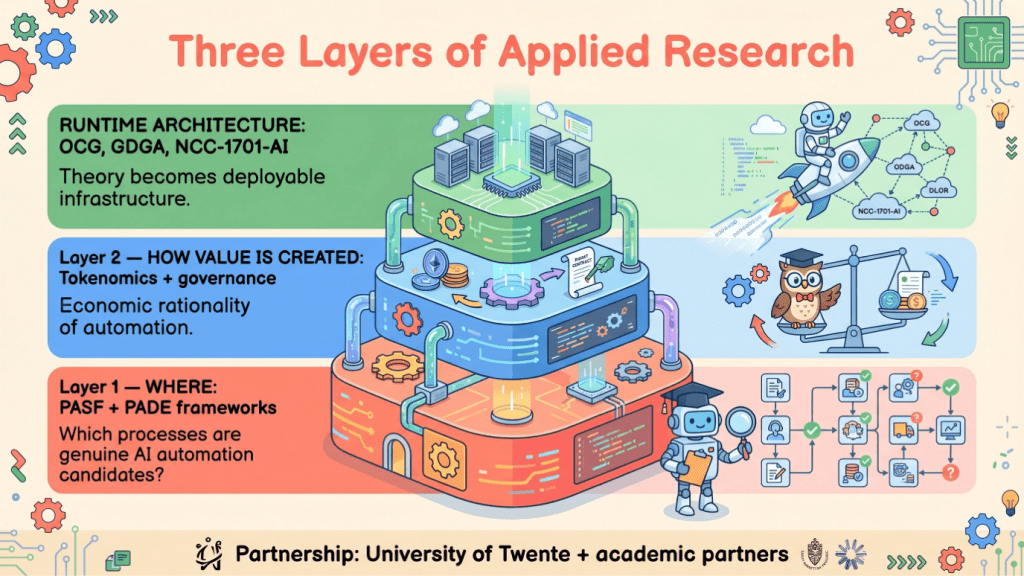

Our work is organized around two pillars. The first is process agentification: redesigning enterprise processes so that AI agents, workflows, human oversight, and control mechanisms operate as one coordinated system.

The second is AI-assisted software development lifecycles, where engineering itself is transformed through AI-enabled architecture, coding, testing, deployment, and continuous improvement. In both areas, we work from strategy through execution to measurement of business outcomes.

Frontier AI and Enterprise AI Are Not the Same Thing

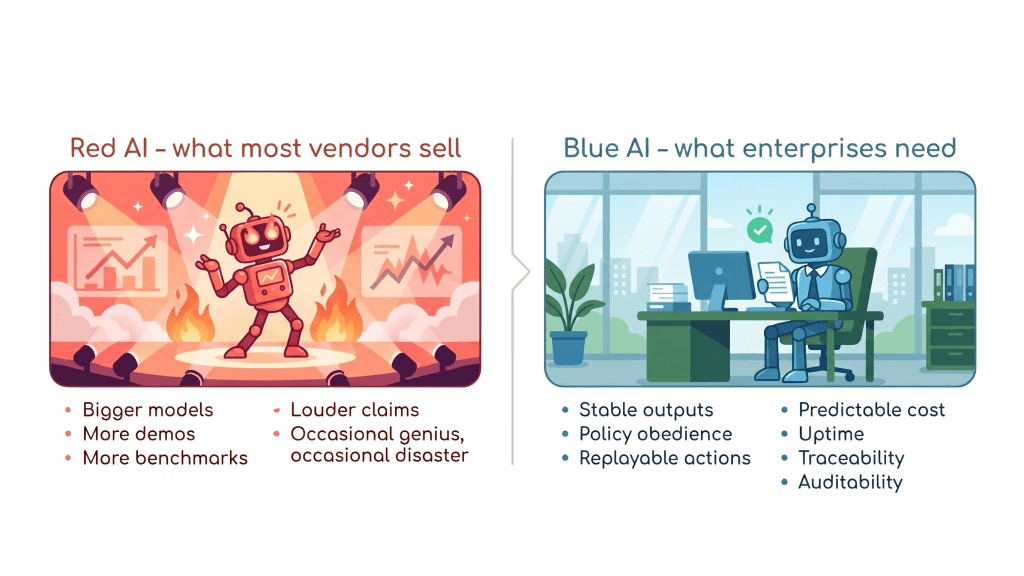

The frontier laboratories are building remarkable systems: trillion-parameter architectures, hyperscale compute, world models, and increasingly sophisticated reasoning. We respect that work, and it will continue to shape the future of computing.

But frontier AI and enterprise AI solve different problems. Frontier systems are optimized for raw capability and benchmark performance, whereas enterprises need AI that can be planned, predicted, audited, governed, and economically justified. They need systems that function consistently in regulated environments, within established workflows, and under conditions where mistakes carry financial, legal, or reputational consequences.

That is why we use the term Blue AI. It is our name for enterprise-grade intelligence engineered for reliability, accountability, and measurable value creation. AI designed not to impress, but to perform.

The NCC-1701-AI Research Program

To advance this vision, we launched our own research initiative, the NCC-1701-AI program, also known as the Blue AI Program. Its purpose is to develop an AI system that is capable of operating safely and effectively in the parts of the enterprise where current approaches are least trustworthy and where the greatest value is at stake.

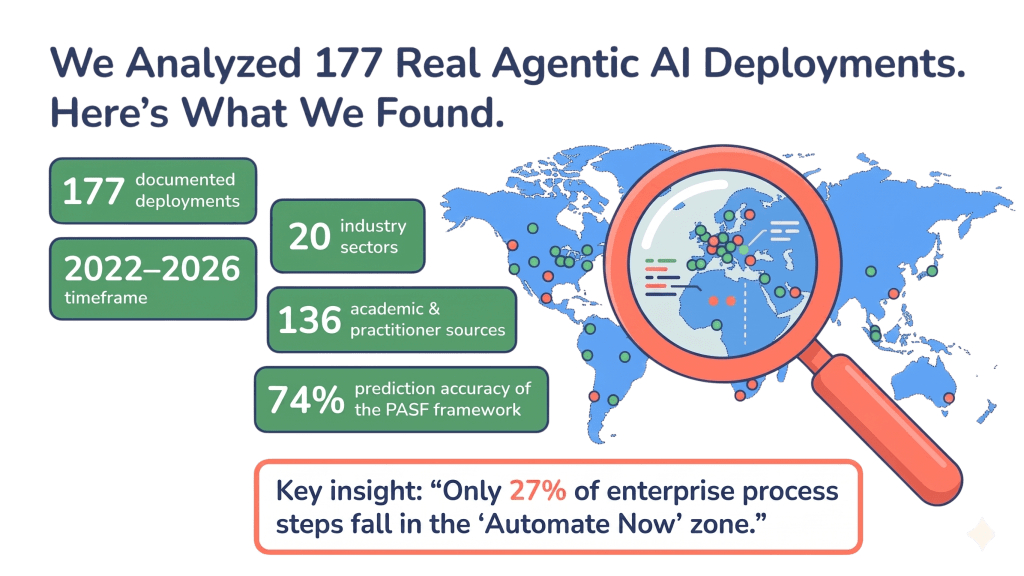

The program began with a detailed analysis of 177 agentic automation projects across multiple domains. The findings were consistent. Current AI performs well in narrow task environments, but degrades sharply when introduced into complex operational processes involving exceptions, dependencies, controls, and cross-functional accountability.

This led us to develop the Process Zone Model.

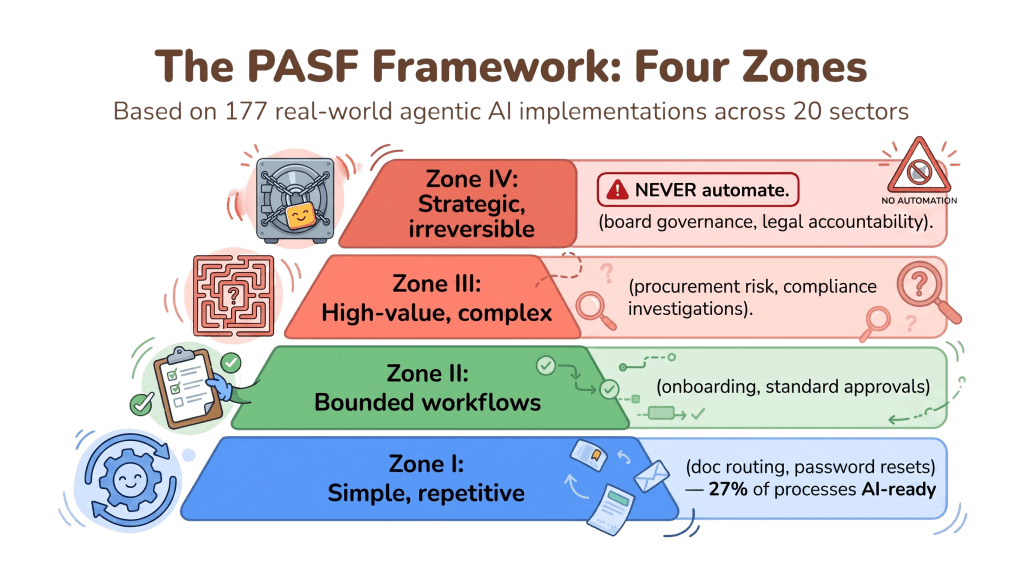

The Four Process Zones

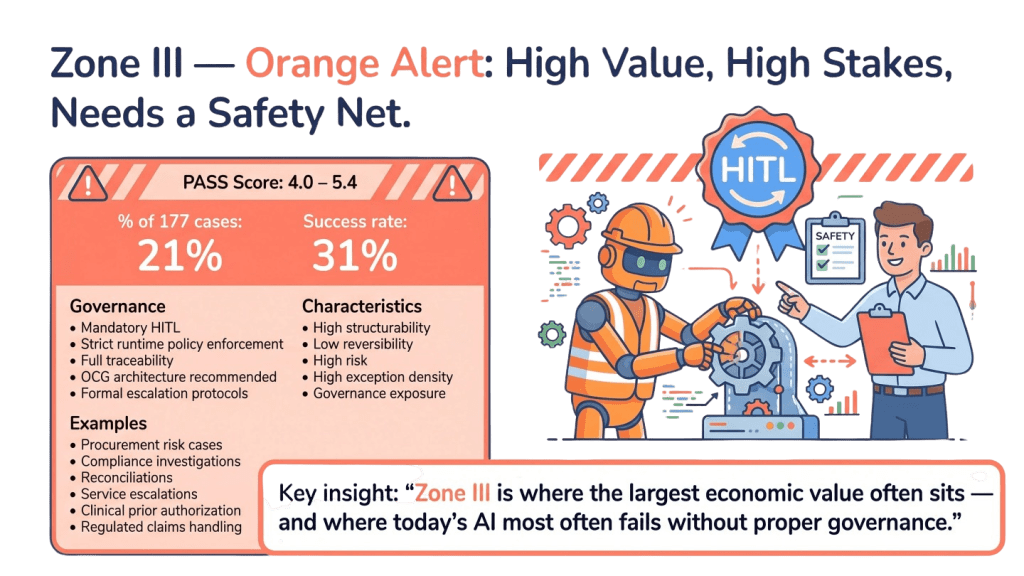

We classify enterprise work into four zones.

Zone I covers isolated, low-risk tasks with limited consequences: summarization, drafting, retrieval, categorization. Current AI handles these well.

Zone II includes structured workflows with clear decision points and human supervision, such as service operations, intake flows, and document handling. Current AI can perform here with the right orchestration.

Zone III is where most strategic enterprise value lives: complex, exception-heavy, compliance-sensitive, cross-functional processes including finance operations, legal approvals, healthcare pathways, procurement controls, and mission-critical service delivery. These processes are dynamic, interconnected, and sensitive to error.

Zone III is also where most current AI becomes unreliable.

Zone IV covers fully autonomous, self-optimizing operations where AI systems coordinate, adapt, and govern themselves across the enterprise without continuous human intervention. No current AI system operates reliably at this level. It is the frontier we are building toward.
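To make the zone boundaries concrete, the classification can be sketched as a small decision function. This is an illustrative sketch only, not the Process Zone Model itself: the `ProcessProfile` attributes and the order of the checks are assumptions chosen to mirror the descriptions above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Zone(IntEnum):
    I = 1    # isolated, low-risk tasks
    II = 2   # structured workflows with human supervision
    III = 3  # complex, exception-heavy, compliance-sensitive processes
    IV = 4   # fully autonomous, self-optimizing operations


@dataclass(frozen=True)
class ProcessProfile:
    # Hypothetical attributes; a real assessment would use far richer criteria.
    multi_step: bool            # a workflow rather than a single task
    exception_heavy: bool       # frequent deviations from the standard path
    compliance_sensitive: bool  # errors carry legal or regulatory consequences
    self_governing: bool        # expected to run without continuous human oversight


def classify(profile: ProcessProfile) -> Zone:
    """Return the highest zone whose defining trait the process exhibits."""
    if profile.self_governing:
        return Zone.IV
    if profile.exception_heavy or profile.compliance_sensitive:
        return Zone.III
    if profile.multi_step:
        return Zone.II
    return Zone.I
```

Under these assumptions, a document summarization task would land in Zone I, while an invoice approval flow with compliance obligations would land in Zone III.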

The Agentification Ceiling

Based on our research, current mainstream AI architectures face what we call the agentification ceiling, which today sits at approximately 35 percent. In other words, only a minority of enterprise processes can be safely automated using current task-oriented AI systems.

The reason is structural. Most models are trained to perform tasks, not to understand processes. They do not naturally reason across long operational chains, conflicting constraints, governance obligations, and downstream consequences.

Deploying unconstrained AI into Zone III operations means introducing probabilistic behavior into deterministic environments. Errors may be infrequent, but when they occur they are expensive, invisible, and difficult to trace.

Neurosymbolic AI and the Ontological Compliance Gateway

The objective of the Blue AI Program is to build AI capable of operating inside Zone III with trustworthiness and control. To achieve this, our systems combine modern machine learning with symbolic reasoning. Neural models are strong at pattern recognition, language understanding, and probabilistic inference. Symbolic systems are strong at logic, structure, constraints, and explicit reasoning. Neural systems generate possibilities. Symbolic systems govern what is permissible. Together, they create AI that is both capable and disciplined.

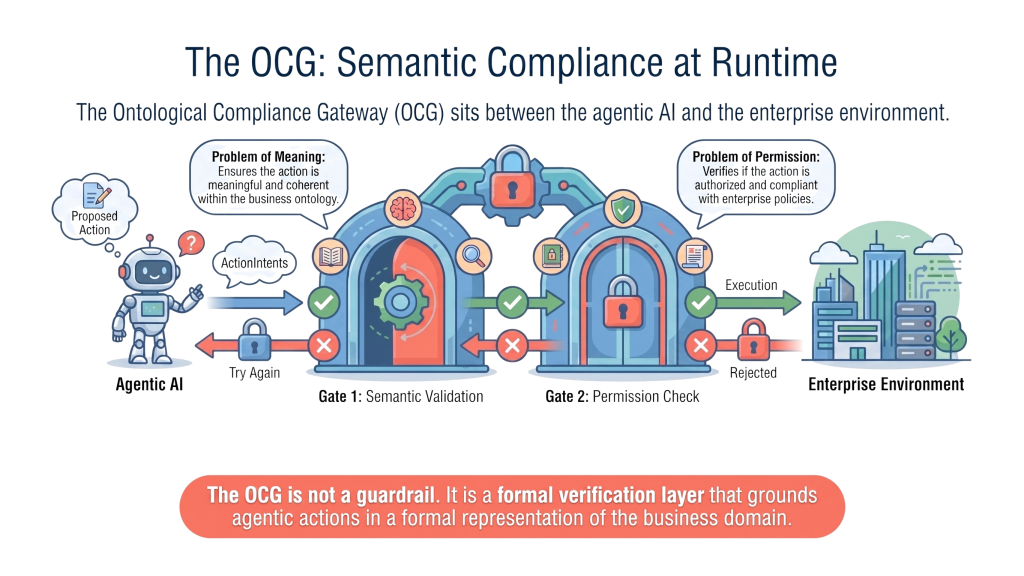

At the center of our architecture is the Ontological Compliance Gateway. The OCG acts as a control layer between model outputs and enterprise execution. A semantic gate interprets meaning, relationships, domain entities, and business intent. A rules gate applies formal constraints including compliance policies, approval hierarchies, thresholds, segregation of duties, and legal restrictions. Together, they ensure that AI decisions are not merely plausible, but contextually valid and operationally allowed.
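The two-gate idea can be illustrated with a minimal sketch. It is not the OCG implementation: `ProposedAction`, `KNOWN_ACTIONS`, the 10,000 threshold, and the individual rule functions are all hypothetical stand-ins for a real domain ontology and policy set. The point is only the shape: a model-proposed action is first checked for meaning, then for permissibility, before anything is executed.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass(frozen=True)
class ProposedAction:
    # Hypothetical structure for an action proposed by a model.
    kind: str
    amount: float
    requester: str
    approver: str


@dataclass
class GateResult:
    allowed: bool
    reasons: List[str] = field(default_factory=list)


# Hypothetical stand-in for a domain ontology: the action types the enterprise recognizes.
KNOWN_ACTIONS = {"approve_invoice", "create_purchase_order"}


def semantic_gate(action: ProposedAction) -> Optional[str]:
    """Check that the action is meaningful in the domain; return an error or None."""
    if action.kind not in KNOWN_ACTIONS:
        return f"unknown action type: {action.kind}"
    if action.amount <= 0:
        return "amount must be positive"
    return None


def threshold_rule(action: ProposedAction) -> Optional[str]:
    # Assumed approval threshold, for illustration only.
    if action.amount > 10_000:
        return "amount exceeds approval threshold"
    return None


def segregation_of_duties(action: ProposedAction) -> Optional[str]:
    if action.requester == action.approver:
        return "requester and approver must differ"
    return None


RULES: List[Callable[[ProposedAction], Optional[str]]] = [
    threshold_rule,
    segregation_of_duties,
]


def gateway(action: ProposedAction) -> GateResult:
    """Run the semantic gate first, then every rule; collect all rule violations."""
    semantic_error = semantic_gate(action)
    if semantic_error is not None:
        return GateResult(False, [semantic_error])
    violations = [msg for rule in RULES if (msg := rule(action)) is not None]
    return GateResult(not violations, violations)
```

In this sketch the rules gate reports every violation rather than stopping at the first, so that a rejected action carries a complete explanation of why it was not allowed.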

Governance by Design

In most AI deployments, governance is an afterthought. Controls are added later, dashboards are built later, and auditability surfaces only after the first audit request. We reject that model. In Blue AI, governance is built directly into the runtime architecture. Traceability, explainability, control logic, and policy enforcement exist from day one. The result is a system with built-in discipline rather than one that requires constant correction after failure.
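One way to picture governance living in the runtime rather than being bolted on later is a decision function that cannot run without leaving a trace. The sketch below is an assumption-laden illustration, not a description of Blue AI internals: the `audited` decorator, the in-memory `AUDIT_LOG`, the policy identifier, and the refund rule are all hypothetical.

```python
import functools
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List

# Hypothetical in-memory audit store; a real system would use durable, tamper-evident storage.
AUDIT_LOG: List[Dict[str, Any]] = []


def audited(policy_id: str) -> Callable:
    """Wrap a decision function so every call records its inputs, outcome, and policy."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(**inputs: Any) -> Any:
            outcome = fn(**inputs)
            AUDIT_LOG.append({
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "decision": fn.__name__,
                "policy_id": policy_id,
                "inputs": inputs,
                "outcome": outcome,
            })
            return outcome
        return wrapper
    return decorator


@audited(policy_id="REFUND-001")  # hypothetical policy identifier
def approve_refund(amount: float, customer_tier: str) -> bool:
    # Assumed rule for illustration: small refunds or gold-tier customers are approved.
    return amount <= 200 or customer_tier == "gold"
```

Calling `approve_refund(amount=150.0, customer_tier="standard")` returns the decision and appends one record to `AUDIT_LOG`, so the trace exists from the first call rather than after the first audit request.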

The next era of AI will not belong only to those who build the largest models. It will belong to those who build the most trusted systems.