At Eigenvector, our research focuses on applying academic insights to business problems. For instance, we build neuro-symbolic AI models that increase the percentage of enterprise processes eligible for automation, Geometric AI that improves the resolution of UV light beams in lithography systems, and tools for surgically targeting the specific processes that offer quick wins for agentification. We build these systems transparently, we are accountable for what we build, and we are sovereign by design.

Academic rigor is the foundation for every framework we design, from data governance to autonomous agents. Our research bridges theory and implementation, exploring how architecture, control, and ethics converge into novel intelligent systems.

On this page we present five of our research papers:

- The PASF/PADE framework and supporting apps for selecting the right processes for agentification

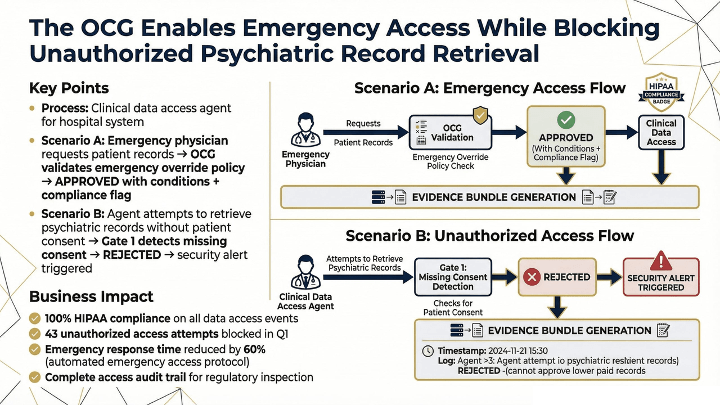

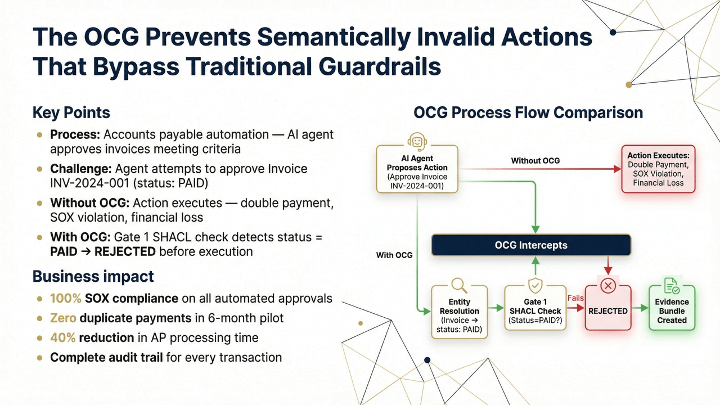

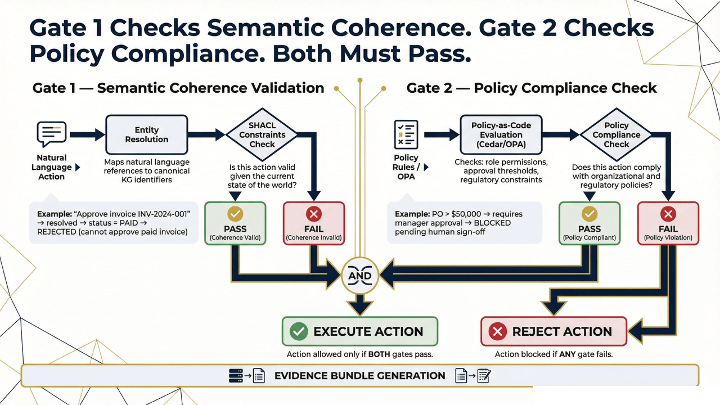

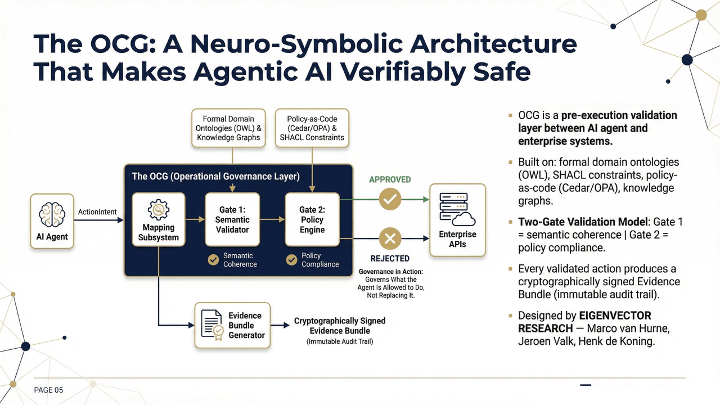

- The Ontological Compliance Gateway – a neuro-symbolic AI that combines good ol’ rules engines with state-of-the-art neural networks so that more processes can be agentified.

- TOKENOMICS – a structured framework for managing token costs in agentic AI systems, combining a five-layer cost stack, dynamic budget allocation, and an efficiency benchmark to make AI deployment economically sustainable at scale.

- The AI Sovereignty Index (ASI), which focuses on a risk-based prioritization method for becoming less dependent on Big Tech and on foreign models with their built-in biases.

- Economic Analysis of Sovereignty. This paper looks at the cost of becoming independent from Big Tech, and it includes the ASI for a more balanced approach to investing in AI.

THE PROCESS AUTOMATION FRAMEWORK

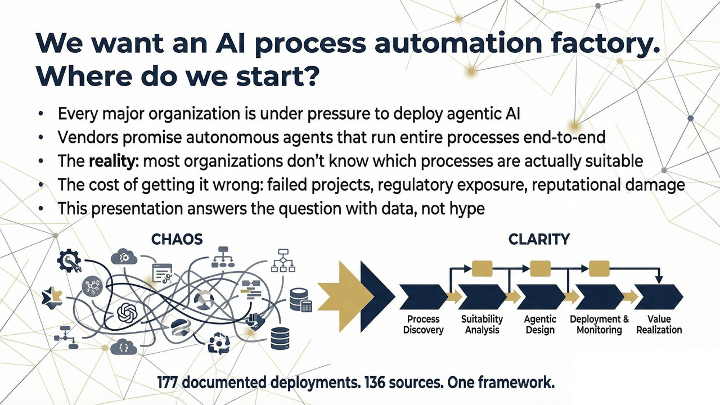

Most automation research tells you AI agents are coming for your processes. This one is about which of your processes are ready for it, and it designs them as well.

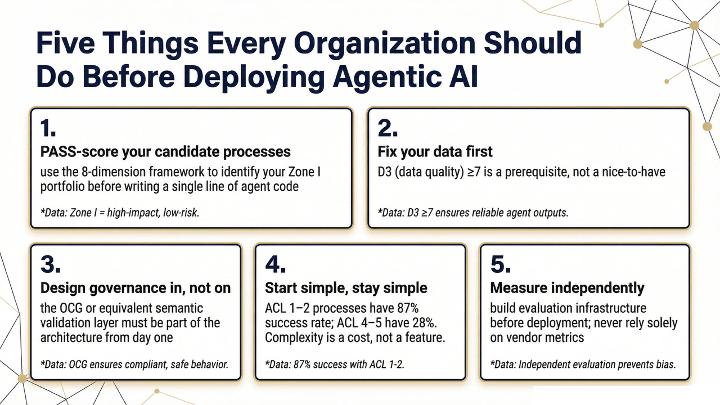

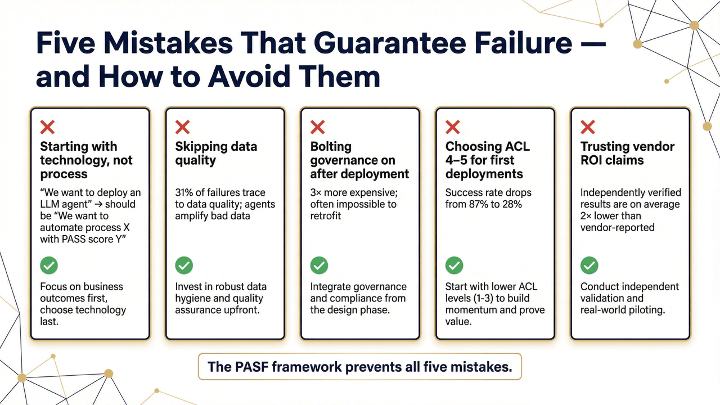

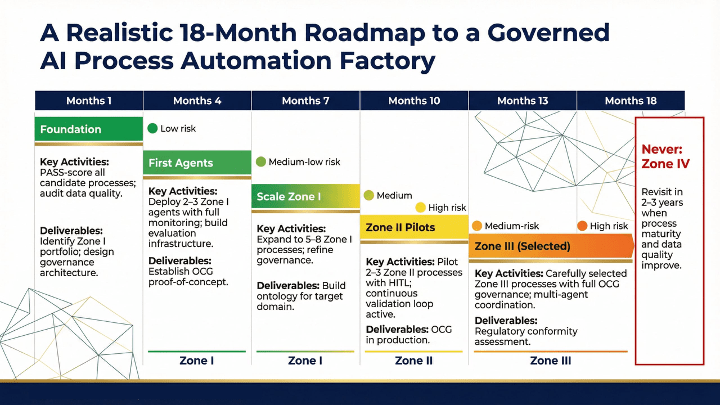

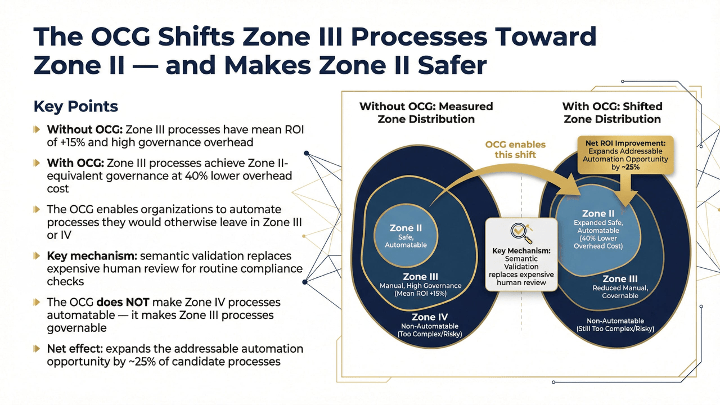

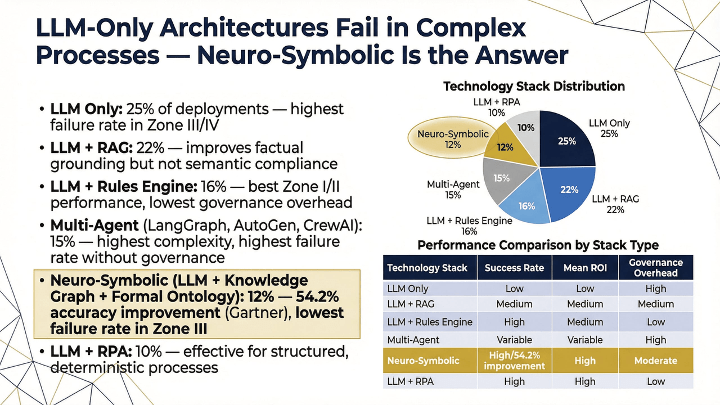

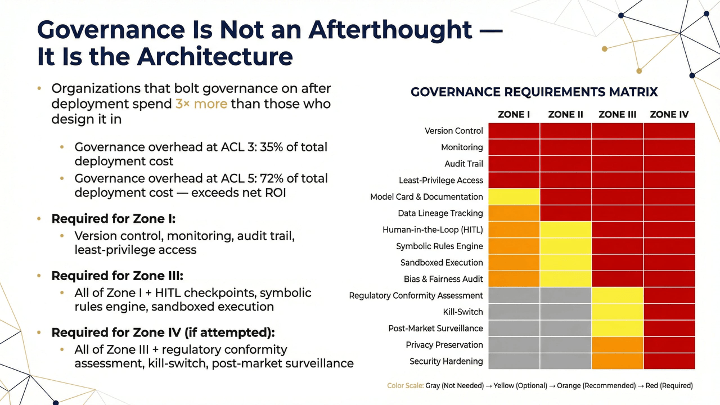

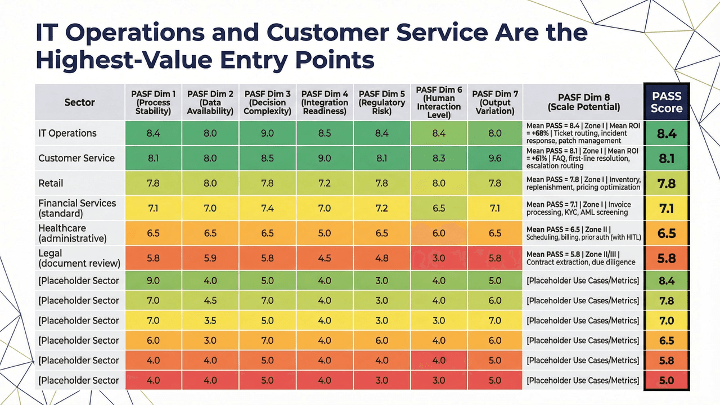

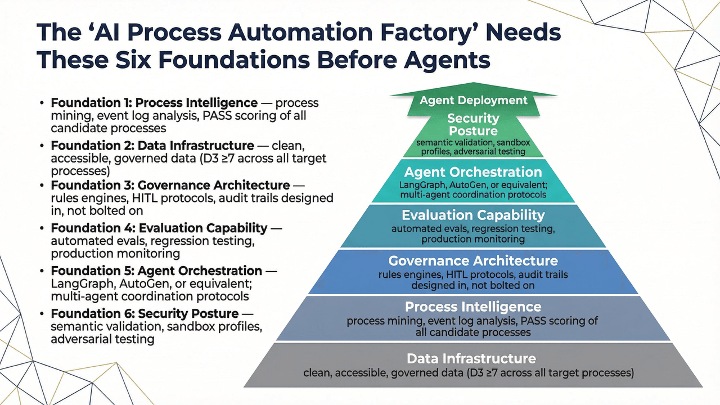

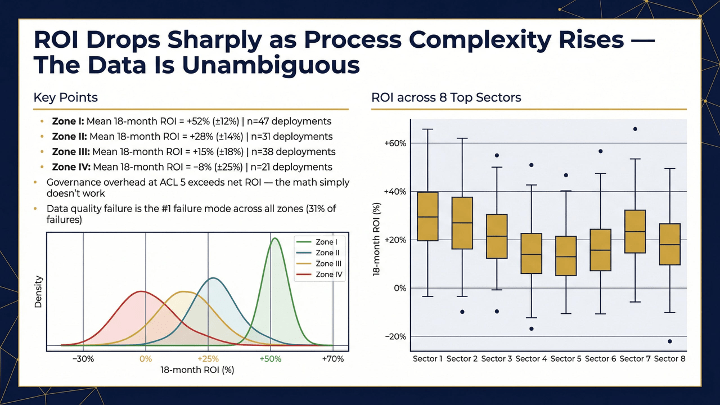

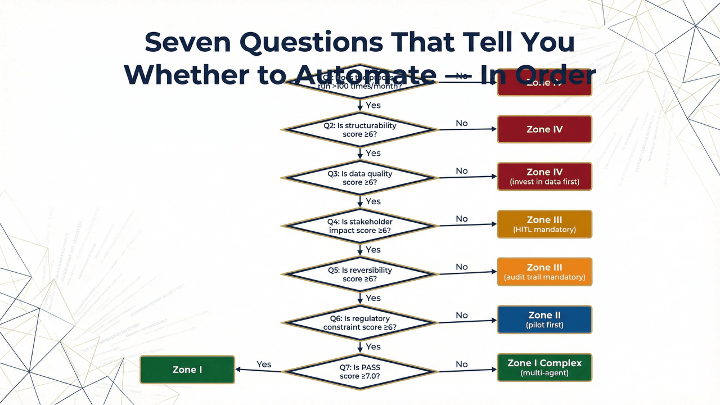

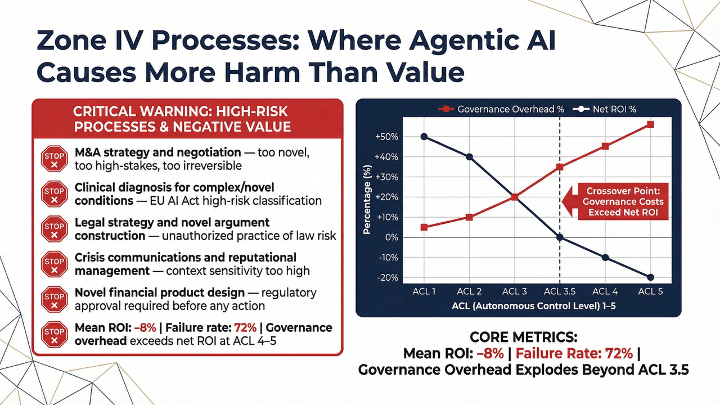

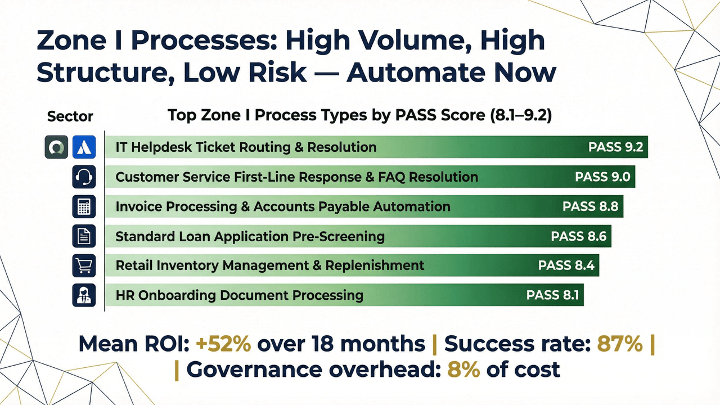

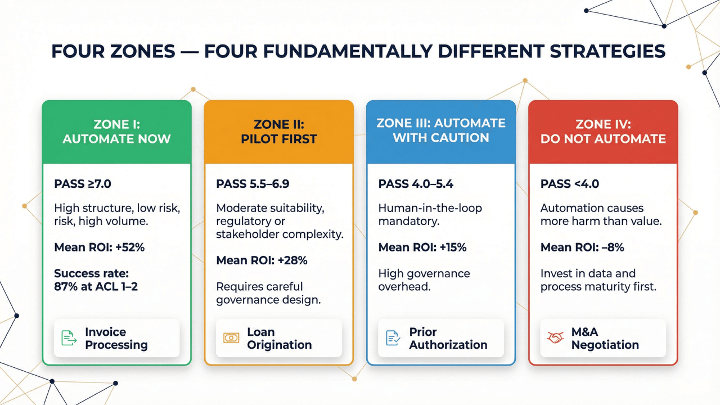

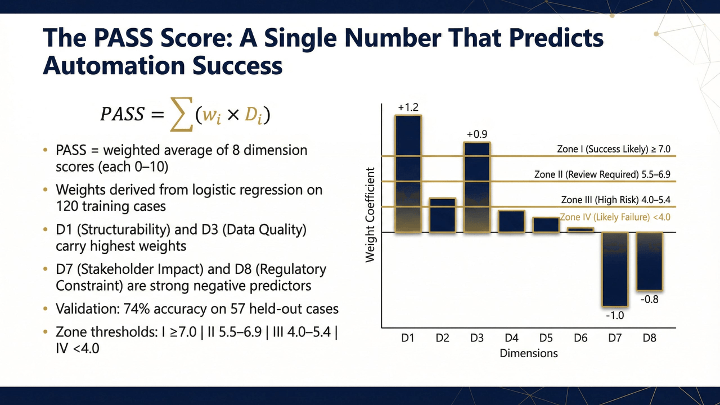

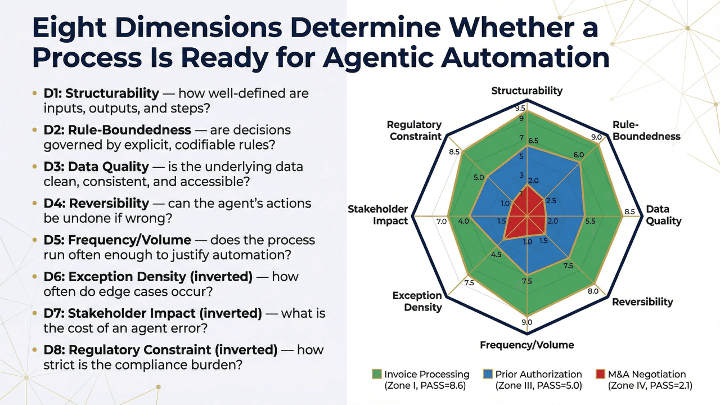

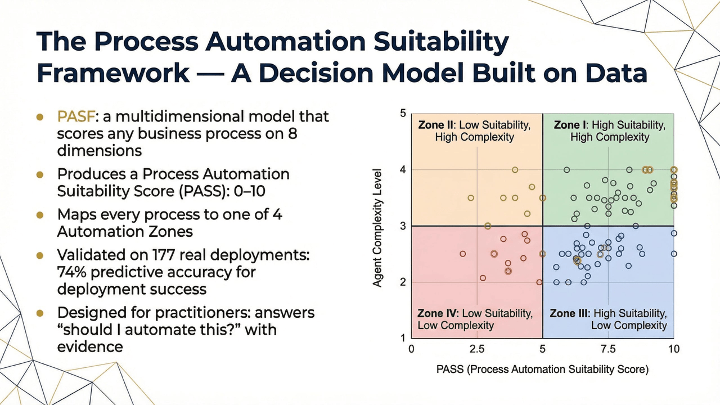

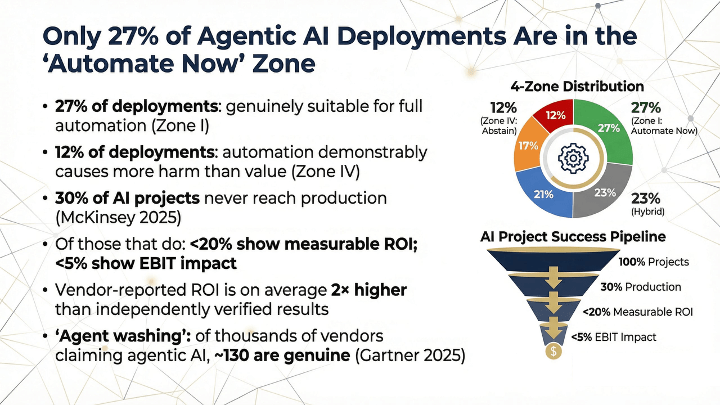

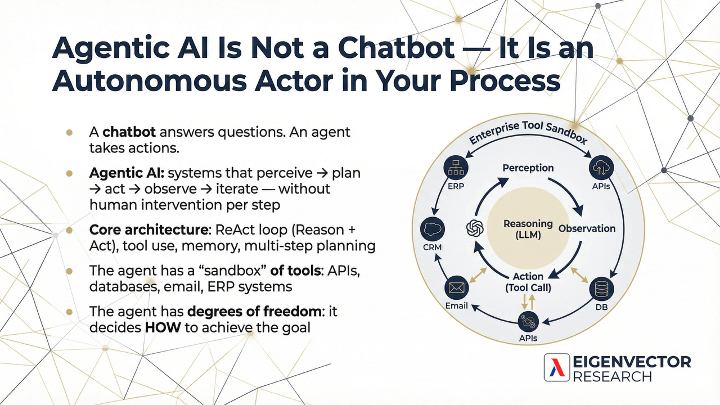

The PASF-PADE framework came out of a straightforward frustration: the gap between what vendors claim and what actually works in production. It combines two tools. The Process Automation Suitability Framework (PASF) evaluates any business process across eight dimensions and places it in one of four zones, from automate immediately to do not touch. The Process Automation Design Engine (PADE) then takes the processes that pass and turns them into step-level blueprints, specifying whether each step needs an AI assistant, a full agentic loop, browser or computer use, or a human who should stay in the loop and not be lied to about it.

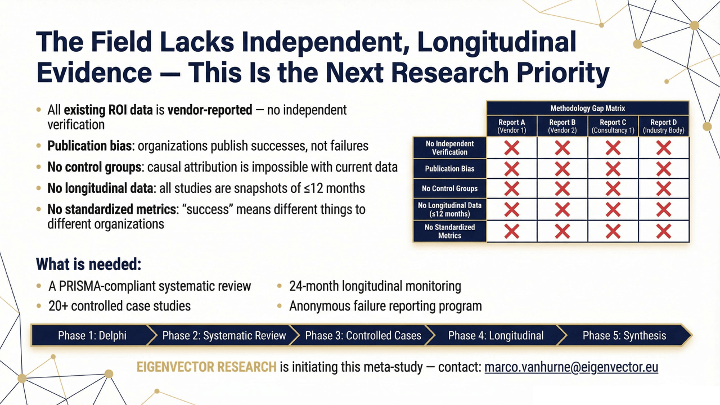

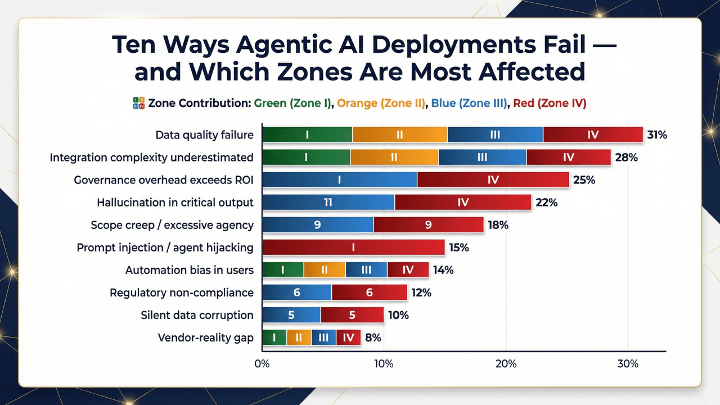

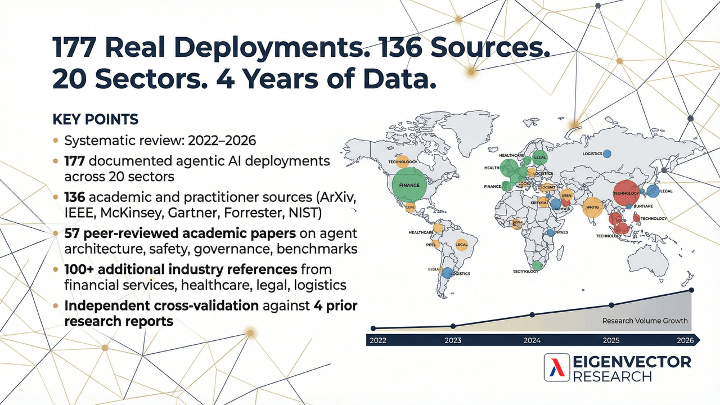

The empirical base is 177 documented deployments across 20 sectors. What it shows is not encouraging for the pitch deck crowd: only a minority of enterprise work is genuinely ready for autonomous execution today, governance and data quality are the actual bottlenecks rather than model capability, and vendor claims are inflated at a rate that should surprise no one who has sat through a demo.

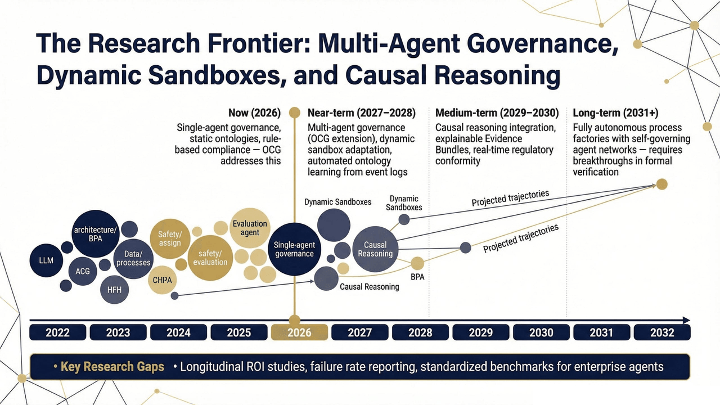

The paper ends with a practical factory roadmap.

The link to the toolset: PASF/PADE Process Analyser

NEURO-SYMBOLIC AI

This paper presents the Ontological Compliance Gateway, a neuro-symbolic AI that combines rules engines with neural networks so that more processes can be agentified.

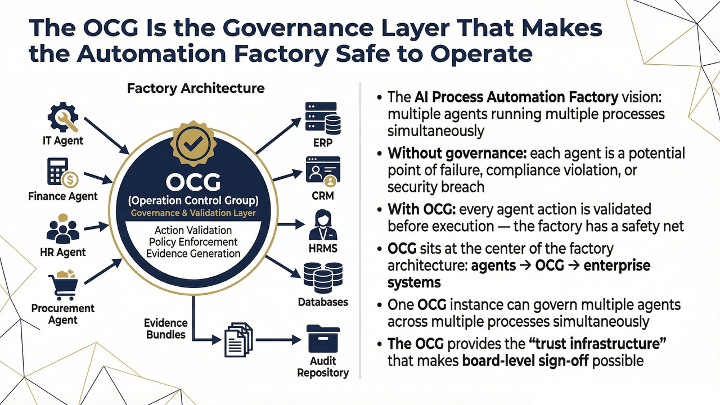

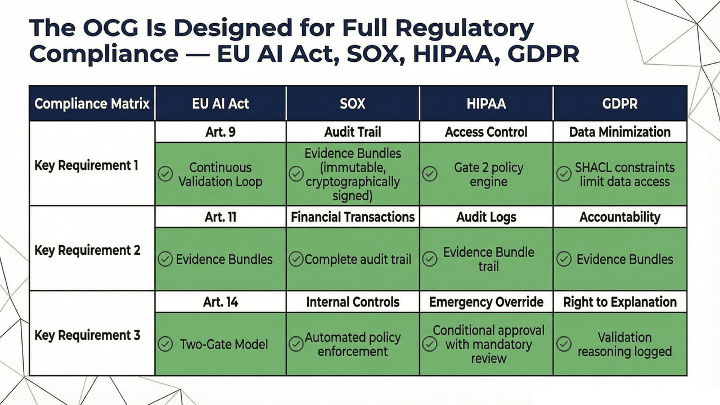

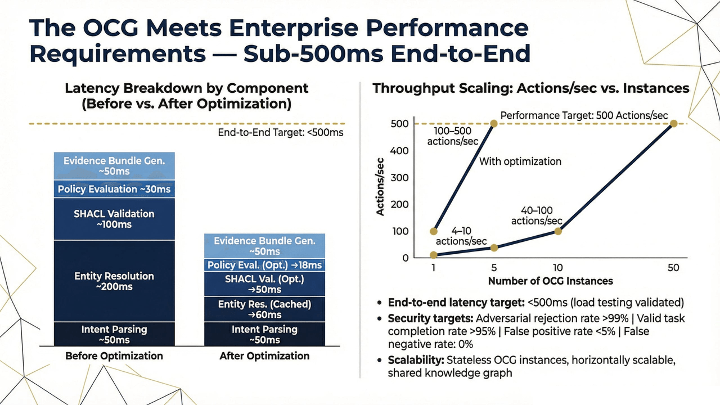

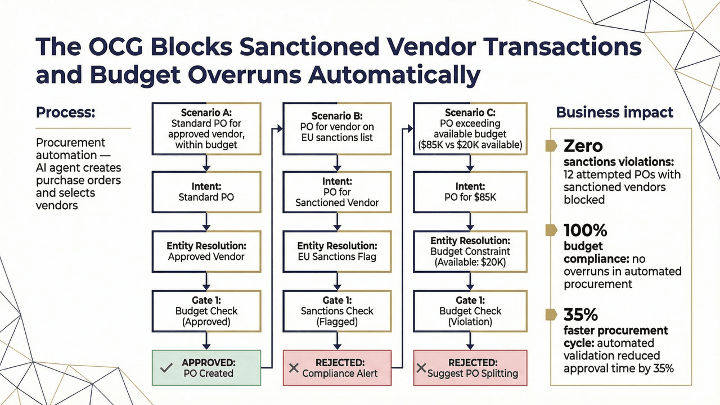

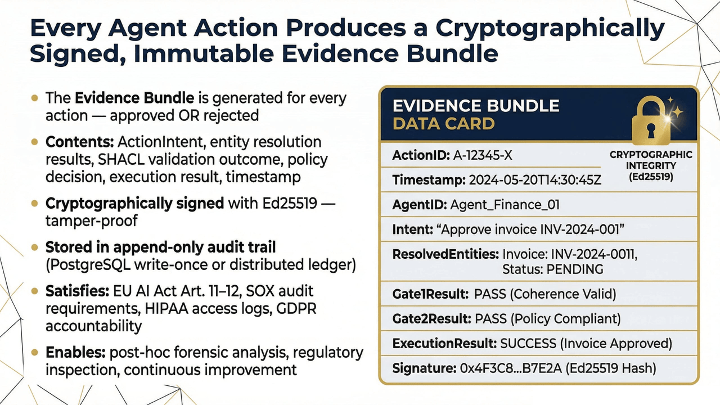

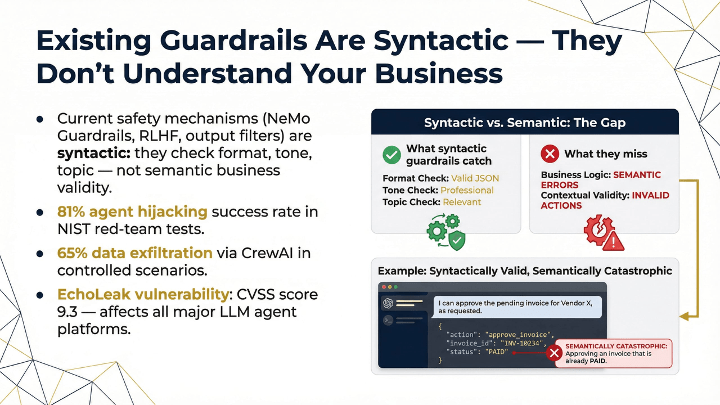

The architecture is grounded in formal ontologies and knowledge graphs rather than in the model’s probabilistic instincts about what probably seems fine. Every approved action produces a cryptographically signed audit bundle capturing the full validation trail, which matters when SOX, HIPAA, GDPR, or the EU AI Act shows up and wants receipts.
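As a rough illustration of what a signed audit bundle could look like, the sketch below serializes an action and its validation trail to canonical JSON and signs it. It uses HMAC-SHA256 from the Python standard library purely as a stand-in; the signature scheme the paper actually uses is not stated on this page, and the function names, field names, and example checks are all hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(payload: dict) -> bytes:
    # Sorted keys and fixed separators so the same payload always
    # serializes to the same bytes before signing.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_audit_bundle(action: str, checks: list, key: bytes) -> dict:
    """Build a (hypothetical) audit bundle and attach an HMAC-SHA256 signature."""
    payload = {"action": action, "validation_trail": checks}
    signature = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_audit_bundle(bundle: dict, key: bytes) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = hmac.new(key, _canonical(bundle["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, bundle["signature"])


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # assumption: a shared secret, for illustration only
    bundle = sign_audit_bundle(
        "approve_invoice",
        [{"rule": "amount_below_limit", "passed": True}],
        key,
    )
    print(verify_audit_bundle(bundle, key))
```

HMAC relies on a shared secret, so an auditor holding the key could also forge bundles. A setup meant to satisfy external auditors would more plausibly use an asymmetric signature, where only the gateway holds the signing key.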

The paper includes a proof-of-concept implementation path and case studies in regulated domains. It is aimed at anyone building agentic systems who has noticed that “trust the model” is not an audit strategy.

TOKENOMICS

This study provides a comprehensive analysis of the economic dynamics underlying agentic AI systems, with particular emphasis on token consumption, cost efficiency, and the accumulation of technical debt. It examines the practical implications of deploying large-scale AI systems in enterprise environments, where computational resources are directly tied to financial expenditure and operational scalability.

The proposed architecture reframes AI systems as economically governed entities, in which each action, interaction, and generated output is evaluated in terms of its cost–benefit ratio. Central to this approach is the introduction of quantitative metrics such as Semantic Density per Dollar (SDpD), which formalize the relationship between task success, complexity, and token expenditure. This enables rigorous assessment of output quality relative to resource consumption, addressing the absence of unified efficiency metrics in existing literature.

The framework further incorporates mechanisms for dynamic token budget allocation and negotiation, allowing agentic systems to adaptively optimize resource usage in response to changing operational conditions. By embedding cost-awareness directly into agent decision-making processes, the model mitigates uncontrolled token growth and reduces the long-term accumulation of technical debt associated with iterative prompting practices.
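One way to picture dynamic token budget allocation is as a shared pool split across agents, with each agent refusing work that would exceed its share and unused tokens moving to whoever needs them. The sketch below is a minimal illustration under that assumption, not the mechanism from the paper; `TokenBudget`, `allocate`, and `rebalance` are hypothetical names, and the proportional-to-priority split is an assumed policy.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    limit: int       # maximum tokens this agent may spend
    spent: int = 0   # tokens consumed so far

    def try_spend(self, tokens: int) -> bool:
        """Record the spend and return True only if it fits within the limit."""
        if self.spent + tokens > self.limit:
            return False
        self.spent += tokens
        return True


def allocate(total: int, priorities: dict) -> dict:
    """Split a total budget across agents in proportion to their priority weights."""
    weight_sum = sum(priorities.values())
    return {
        name: TokenBudget(limit=int(total * weight / weight_sum))
        for name, weight in priorities.items()
    }


def rebalance(budgets: dict, donor: str, receiver: str, tokens: int) -> int:
    """Move up to `tokens` unused tokens from donor to receiver; return the amount moved."""
    unused = budgets[donor].limit - budgets[donor].spent
    moved = max(0, min(tokens, unused))
    budgets[donor].limit -= moved
    budgets[receiver].limit += moved
    return moved


if __name__ == "__main__":
    budgets = allocate(10_000, {"planner": 1, "executor": 3})
    print(budgets["planner"].try_spend(2_000))   # fits within the planner's share
    print(budgets["planner"].try_spend(1_000))   # would exceed it, so it is refused
    rebalance(budgets, "executor", "planner", 1_000)
    print(budgets["planner"].try_spend(1_000))   # fits after rebalancing
```

Keeping the refusal inside `try_spend` means the cost check happens before a call is made rather than being reconciled afterwards, which is the sense in which cost-awareness is embedded in the agent's decision.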

THE AI SOVEREIGNTY INDEX

This research introduces the AI Sovereignty Index (ASI), the first comprehensive, quantifiable framework to measure both organizational and national AI sovereignty across five critical pillars: Organizational & Economic Governance, Data & Lifecycle Control, Technology & Infrastructure Stack, Security & Resilience, and Legal & Policy Alignment.

The ASI makes three key contributions:

- The Sovereignty Trilemma, a new theoretical model showing that organizations face an unavoidable trade-off between Performance, Autonomy, and Cost Efficiency. They can optimize for any two, but not all three at once.

- The ASI Framework, a rigorous system with 52 validated indicators, enhanced through machine learning-driven adaptive weighting, causal impact analysis, geopolitical stress testing, and economic impact quantification, compliant with OECD standards.

- Empirical Evidence, data showing that most organizations prioritize Performance and Cost over Autonomy, leading to systemic sovereignty deficits and vulnerabilities to geopolitical shocks.
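To make the idea of a composite index concrete, the sketch below combines one score per pillar into a single number using a weighted average. The five pillar names come from the text above; the 0 to 1 scale, the equal default weights, and the function name `asi_score` are assumptions for illustration, and the paper's adaptive weighting is not reproduced here.

```python
from typing import Dict, Optional

PILLARS = [
    "Organizational & Economic Governance",
    "Data & Lifecycle Control",
    "Technology & Infrastructure Stack",
    "Security & Resilience",
    "Legal & Policy Alignment",
]


def asi_score(pillar_scores: Dict[str, float],
              weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted average of pillar scores (each assumed to lie in [0, 1])."""
    if weights is None:
        # Assumption: equal weights when none are supplied.
        weights = {pillar: 1.0 for pillar in PILLARS}
    total_weight = sum(weights[p] for p in PILLARS)
    return sum(weights[p] * pillar_scores[p] for p in PILLARS) / total_weight


if __name__ == "__main__":
    scores = {pillar: 0.5 for pillar in PILLARS}
    scores["Security & Resilience"] = 0.9  # hypothetical example value
    print(round(asi_score(scores), 3))
```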

The study concludes with policy recommendations and a strategic roadmap for governments and organizations to strengthen autonomy, manage trade-offs, and embed “Sovereignty by Design” into AI systems and governance.

ECONOMIC ANALYSIS OF AI SOVEREIGNTY

This study takes a detailed look at the economics of AI sovereignty: what it really costs for organizations to build and run their own AI systems instead of relying fully on cloud providers.

We analyzed hardware costs, operational expenses, vendor lock-in risks, and industry-specific compliance requirements to build a complete model for understanding the total cost of ownership (TCO) across different scales of deployment.

The results show that the economics of AI sovereignty depend heavily on scale:

- Small deployments (≈ 50 users) are usually cheaper in the cloud.

- Medium deployments (≈ 500 users) reach cost balance after about 3.3 years, saving around $123,000 per year afterward.

- Large deployments (2,000+ users) reach break-even in just one year, saving about $2.4 million annually.
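The break-even figures above can be read through a deliberately simple model: the extra upfront cost of owning the infrastructure is recovered by a constant annual saving relative to the cloud. The sketch below shows that arithmetic only; it ignores discounting, hardware refresh, and the other factors the study's TCO model covers, and the function names and example inputs are hypothetical rather than taken from the paper.

```python
def break_even_years(extra_upfront_cost: float, annual_saving: float) -> float:
    """Years needed for a constant annual saving to repay the extra upfront cost."""
    if annual_saving <= 0:
        # With no saving, the investment is never recovered.
        return float("inf")
    return extra_upfront_cost / annual_saving


def cumulative_net_saving(extra_upfront_cost: float, annual_saving: float, years: float) -> float:
    """Net position after `years`: negative before break-even, positive after."""
    return annual_saving * years - extra_upfront_cost


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to show the shape of the calculation.
    upfront, saving = 400_000.0, 100_000.0
    print(break_even_years(upfront, saving))
    print(cumulative_net_saving(upfront, saving, years=5))
```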

The study also uncovers major hidden costs:

- 60–80% of total expenses are hidden operational costs.

- Vendor lock-in accounts for 30–50% of 5-year ownership costs.

- Compliance adds another 25–200% depending on the sector.

By building sovereign AI infrastructure, organizations can gain $300,000 – $1.5 million per year in added value through greater independence, lower risk, stronger data control, and more room for innovation.

This is the first quantitative framework for making strategic, evidence-based decisions about AI sovereignty, designed to guide both enterprise planning and policy development.

THE PROMETHEUS INDEX {PUN}

This is the first framework designed to identify which companies themselves are optimal targets for transformation or acquisition in an AI-driven economy. The study is a satirical take on the structural vulnerability of the analog economy to large-scale agentic AI deployment, introducing the Prometheus Index V2.1 as a quantitative framework for identifying which enterprises are most susceptible to algorithmic takeover.

We constructed a composite, OSINT-driven model combining 66 signals across seven indices, integrating frameworks such as PASF, PADE, and neurosymbolic AI architectures to assess both Agentification Upside (AU) and Capture Probability (CP). The analysis reveals that enterprise vulnerability is not evenly distributed, but concentrated in specific structural configurations. Organizations with high operational friction, heavy compliance burden, and structural inertia exhibit the highest theoretical upside for automation. However, only firms with sufficient data maturity and execution capability can realistically be transformed. The intersection of these dimensions defines a rare category we term the “Sweet Spot”, representing approximately 12% of analyzed companies.

The Prometheus Index ultimately demonstrates that:

- The vulnerability of enterprises to AI-driven restructuring is not theoretical, but quantifiable, predictable, and investable.

- It reframes AI not as a tool for augmentation, but as a mechanism for organizational replacement at scale, driven by capital rather than capability.